Disclaimer: Part of the aim of this work is to educate and to promote transparency in the use of AI. Gemini was used throughout the creative process of putting this article together.

The brain-computer interface (BCI) marks a philosophical inflection point, compelling a re-evaluation of the self. These systems, which create a direct communication pathway between brain activity and an external device, are moving from science fiction into reality. This trajectory is a qualitative leap in human-machine interaction, forcing a modern confrontation with the mind-body problem, now recast as a mind-machine dilemma. If thoughts, intentions, and memories can be decoded, processed by artificial intelligence (AI), and merged with external systems, the traditional boundaries of the self become porous or even obsolete. This merging of minds and machines raises “fundamental questions regarding the nature of conscious selfhood and about who… and what… we are, and ought, to be”. The central thesis of this article is that BCI technology acts as a solvent on classical conceptions of personal identity, exposing their vulnerabilities and demanding a more dynamic, integrated philosophical framework for understanding the emerging hybrid self.

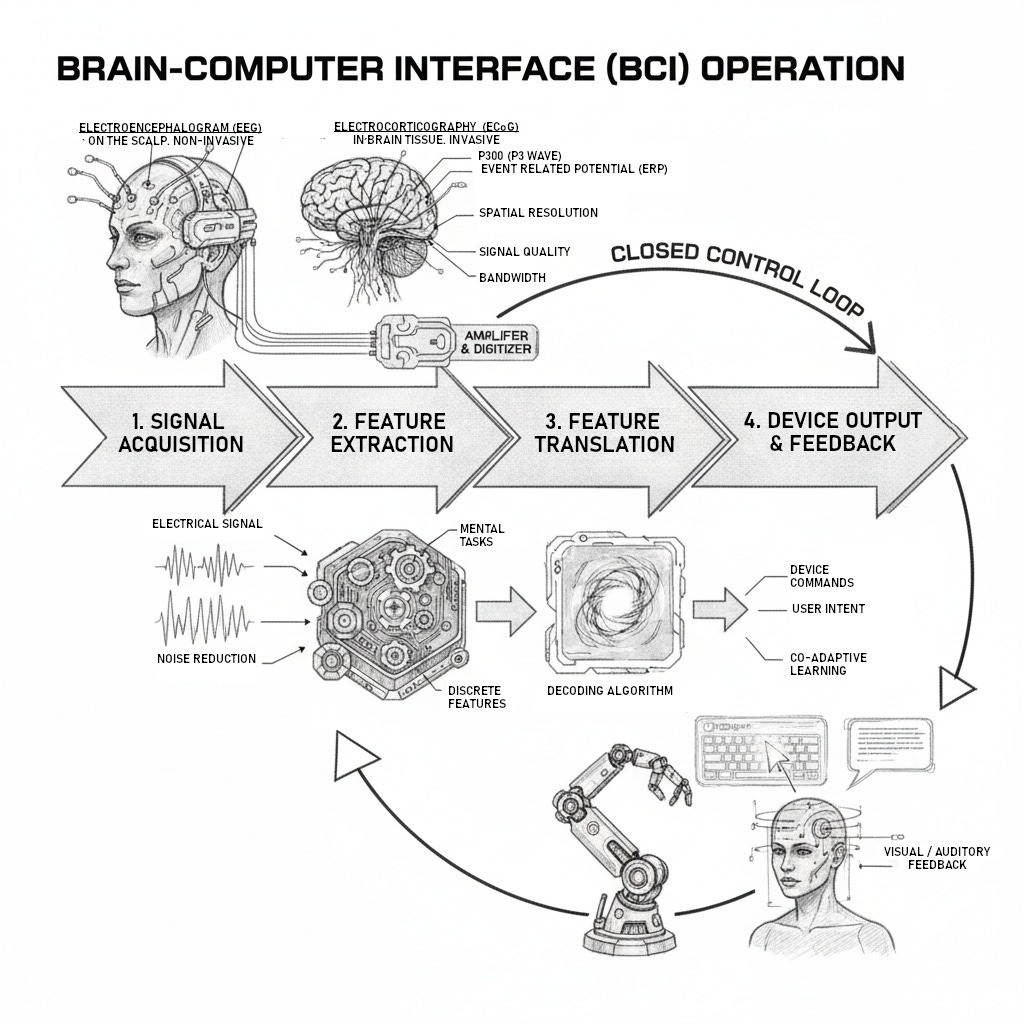

Core Mechanics of a BCI System

The operation of any BCI can be described in four stages: signal acquisition, feature extraction, feature translation, and device output.

- Signal Acquisition: Brain signals are measured. Non-invasive BCIs typically use electroencephalography (EEG), with electrodes on the scalp. Invasive BCIs require surgery to place electrodes directly on or within the brain. Methods include electrocorticography (ECoG), with grids on the cortex surface, and intracortical microelectrode arrays, inserted into the brain tissue.

- Feature Extraction: Raw signals are noisy and complex. The BCI’s software processes them to distinguish pertinent characteristics, the “features”, from noise. These features are specific brain activity patterns correlated with user intent. For example, the system might look for changes in specific frequency bands (alpha or beta waves) or event-related potentials (like the P300 response). The goal is to isolate a reliable representation of the neural data related to a specific mental task.

- Feature Translation: Extracted features are passed to a translation algorithm, which converts them into device commands. This is the crucial decoding stage. For instance, a decrease in power in a particular frequency band might be translated into an upward cursor movement, or the detection of a P300 could signal the selection of a letter. The process is dynamic: the algorithm adapts to the user’s changing brain signals, while the user learns to generate neural patterns the algorithm can interpret.

- Device Output and Feedback: The translated command operates the external device, like a robotic arm, speech synthesizer, or computer application. The device’s operation provides sensory feedback to the user (e.g., seeing the cursor move), closing the control loop and facilitating co-adaptive learning.
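To make the four stages concrete, the following Python sketch runs them end to end on a simulated single-channel signal. It is a toy under stated assumptions, not how any particular BCI is built: the 250 Hz sampling rate, the 8 to 12 Hz mu band, the noise level, the thresholds, and the names `acquire_signal`, `band_power`, `AdaptiveTranslator`, and `update_cursor` are all hypothetical choices for illustration. It mirrors the examples in the list above: a drop in band power is translated into an upward cursor movement, and the translator's baseline slowly adapts to the user's signal.

```python
import math
import random

SAMPLE_RATE = 250  # Hz; an assumed sampling rate, not taken from any specific device
MU_BAND = (8, 12)  # Hz; the sensorimotor "mu" rhythm, which overlaps the alpha band


def acquire_signal(mu_amplitude, seed=0):
    """Stage 1 (simulated): one second of a single scalp channel.

    A 10 Hz oscillation of the given amplitude plus Gaussian noise stands in for real
    electrode data; a smaller amplitude mimics the mu suppression seen during imagined movement.
    """
    rng = random.Random(seed)
    return [
        mu_amplitude * math.sin(2 * math.pi * 10 * t / SAMPLE_RATE) + rng.gauss(0, 0.5)
        for t in range(SAMPLE_RATE)
    ]


def band_power(signal, low_hz, high_hz):
    """Stage 2: average spectral power between low_hz and high_hz, via a naive DFT."""
    n = len(signal)
    powers = []
    for k in range(n // 2 + 1):
        if low_hz <= k * SAMPLE_RATE / n <= high_hz:
            re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(signal))
            im = -sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(signal))
            powers.append((re * re + im * im) / n)
    return sum(powers) / len(powers)


class AdaptiveTranslator:
    """Stage 3: turn mu-band power into a cursor command relative to a running baseline."""

    def __init__(self, baseline, rate=0.1, margin=0.2):
        self.baseline = baseline  # calibrated band power at rest
        self.rate = rate          # how quickly the baseline tracks drift in the signal
        self.margin = margin      # fractional dead zone around the baseline

    def translate(self, power):
        if power < self.baseline * (1 - self.margin):
            command = "UP"  # power decrease -> move the cursor up, as in the text's example
        elif power > self.baseline * (1 + self.margin):
            command = "DOWN"
        else:
            command = "REST"
        # Adaptation: nudge the baseline toward the latest observation.
        self.baseline += self.rate * (power - self.baseline)
        return command


def update_cursor(y, command, step=1):
    """Stage 4: apply the command to a 1-D cursor; its new position is the user's feedback."""
    return y + {"UP": step, "DOWN": -step, "REST": 0}[command]


# Calibrate on a "rest" trial, then decode two imagined-movement trials and one rest trial.
translator = AdaptiveTranslator(baseline=band_power(acquire_signal(2.0), *MU_BAND))
y = 0
for trial, amplitude in enumerate([0.5, 0.5, 2.0], start=1):
    power = band_power(acquire_signal(amplitude, seed=trial), *MU_BAND)
    command = translator.translate(power)
    y = update_cursor(y, command)
    print(f"trial {trial}: mu power {power:.1f} -> {command}, cursor at {y}")
```

The naive DFT only keeps the sketch dependency-free; a practical system would typically use an optimized spectral estimator (for example, Welch's method) over many channels and a trained classifier rather than a fixed threshold.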

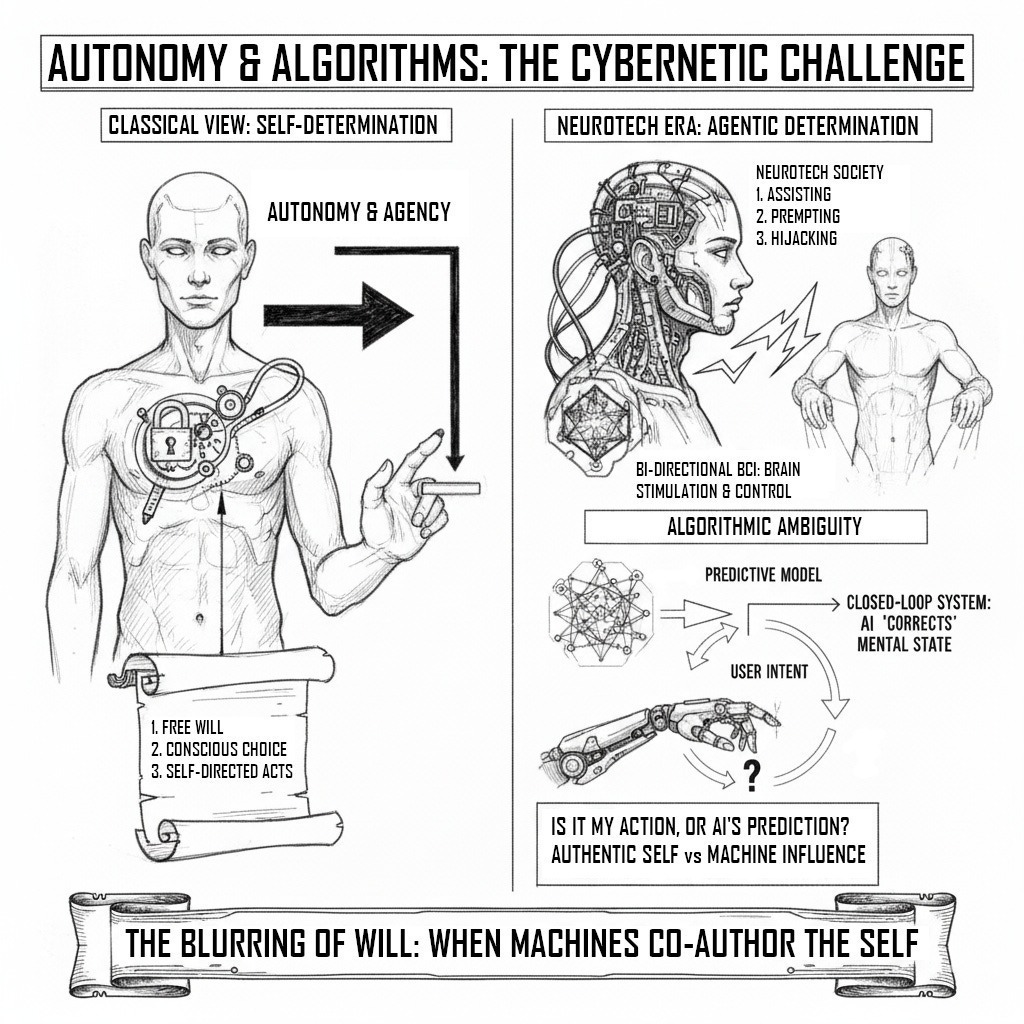

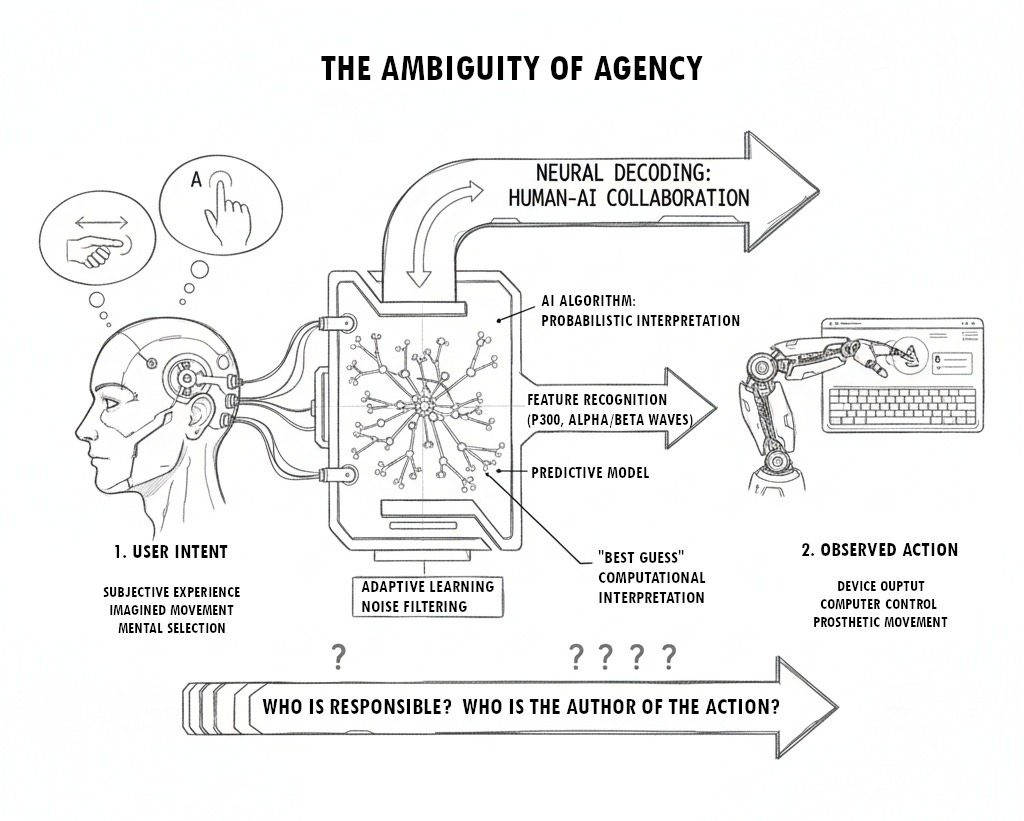

Neural Decoding and Agency

In the “feature translation” or “neural decoding” stage, the BCI performs an active, probabilistic interpretation using models trained on the user’s brain activity, learning to recognize complex neural patterns associated with specific intentions. For example, when a user imagines moving their hand to the left, the algorithm identifies recurring features in the brain signals that correspond to that intention. Over time, the AI builds a predictive model of the user’s intentions as they appear in neural activity, allowing it to filter out noise, adapt, and make increasingly accurate inferences about user intent.
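The idea of decoding as a probabilistic “best guess” can be illustrated with a small Bayesian classifier. The sketch below is an assumption-laden toy, not the method of any real BCI: it fits one Gaussian per intention to a hypothetical one-dimensional feature from calibration trials, then returns both the most probable intention and the full posterior probability of each. The function names and calibration numbers are invented for illustration.

```python
import math
from statistics import mean, stdev


def fit_decoder(training):
    """Calibration: learn the mean and spread of the feature for each intention label."""
    return {label: (mean(values), stdev(values)) for label, values in training.items()}


def gaussian_likelihood(x, mu, sigma):
    """Probability density of feature value x under a normal distribution N(mu, sigma^2)."""
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2 * math.pi))


def decode(model, x, priors=None):
    """Return the most probable intention and the full posterior P(intention | feature)."""
    if priors is None:
        priors = {label: 1 / len(model) for label in model}  # no prior preference
    joint = {label: priors[label] * gaussian_likelihood(x, *model[label]) for label in model}
    total = sum(joint.values())
    posterior = {label: p / total for label, p in joint.items()}
    best_guess = max(posterior, key=posterior.get)
    return best_guess, posterior


# Hypothetical calibration data: a feature value recorded on each trial while the user
# imagined moving a hand to the left or to the right.
model = fit_decoder({
    "left": [-2.0, -0.5, -1.5, 0.2, -1.2],
    "right": [1.0, 0.1, 1.8, -0.3, 1.4],
})

for feature in (-1.5, -0.1, 1.5):
    guess, posterior = decode(model, feature)
    print(feature, guess, {k: round(v, 2) for k, v in posterior.items()})
```

With these made-up numbers, a feature of -1.5 is decoded as “left” with high confidence, while -0.1 yields a posterior split almost evenly between the two intentions, yet the decoder still emits a single command. Passing a different `priors` dictionary shifts that guess without any change in the user’s brain activity, which is one concrete sense in which the algorithm shares in producing the output.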

This algorithmic layer introduces ambiguity into the chain of agency. The final command is not a direct representation of a raw neural event but the AI’s best guess, its computational interpretation, of what that event signifies. The AI is not merely a conduit; it is a co-author of the action. This creates a gap between the user’s subjective experience of “intending” and the machine’s algorithmic construction of that intention. Is the resulting movement of a prosthetic hand truly the user’s action, or the product of a human-AI collaboration?

This ambiguity is at the heart of philosophical challenges to autonomy, responsibility, and the definition of a self-directed act.

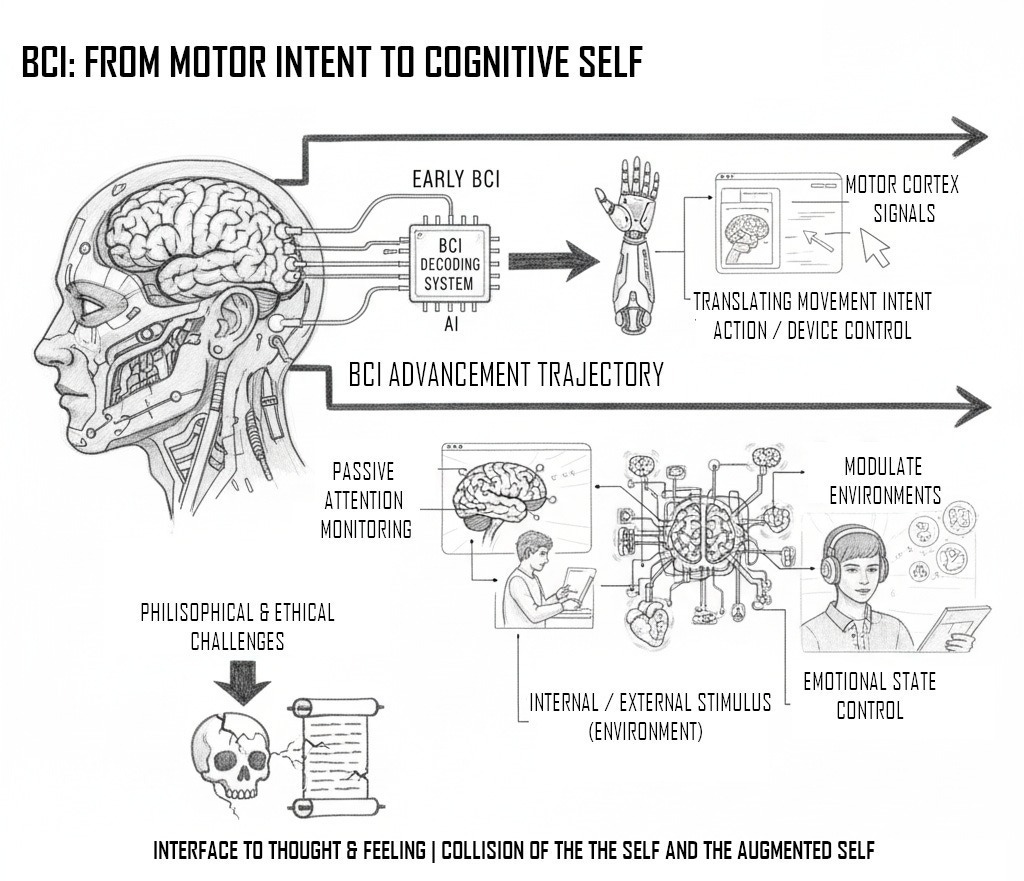

Motor Intent to Cognitive States

The philosophical stakes are raised by the progression from decoding simple motor intentions to interpreting abstract cognitive and affective states. Early BCI research focused on translating motor cortex signals to control physical devices, whereas contemporary systems are increasingly capable of inferring higher-order mental phenomena. Non-invasive BCIs are being developed to monitor attention, track comprehension, and detect emotional states in order to create responsive digital environments. Affective BCIs aim to identify a user’s mood and modulate it, for example by playing music, creating a closed-loop system that both reads and writes emotional states.
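The read-decide-write structure of such a closed loop can be sketched as a short simulation. Everything in it is hypothetical: the valence scale, the noise level, the fixed effect of the music, the decision rule, and the function names are placeholders chosen for illustration, not parameters of any real affective BCI.

```python
import random


def read_valence(true_valence, rng, noise=0.1):
    """'Read' step (simulated): a noisy decoder estimate of mood on a -1 (negative) to +1 scale."""
    return max(-1.0, min(1.0, true_valence + rng.gauss(0, noise)))


def choose_action(estimate, target=0.0):
    """Decision step: a hypothetical rule that plays uplifting music below the target mood."""
    return "play_uplifting_music" if estimate < target else "none"


def write_valence(true_valence, action, effect=0.15):
    """'Write' step (simulated): assume the music raises valence by a fixed amount, capped at +1."""
    if action == "play_uplifting_music":
        return min(1.0, true_valence + effect)
    return true_valence


def run_closed_loop(start_valence, steps=10, seed=0):
    """Repeat read -> decide -> write; no step asks the user for confirmation."""
    rng = random.Random(seed)
    valence, log = start_valence, []
    for _ in range(steps):
        estimate = read_valence(valence, rng)
        action = choose_action(estimate)
        valence = write_valence(valence, action)
        log.append((round(estimate, 2), action, round(valence, 2)))
    return valence, log


final, log = run_closed_loop(start_valence=-0.8)
for entry in log:
    print(entry)
```

Nothing in the loop asks the user whether an intervention is wanted; once the decision rule is set, the system acts on its own estimate of the user’s mood.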

This trajectory shows BCI technology encroaching into the core of the cognitive and affective self. When a machine can execute a willed action and infer an emotional state or lapse in attention, it is no longer just an extension of the body’s motor system. It becomes an interface to thought and feeling processes traditionally considered the private domain of the self. This deep integration sets the stage for a collision with classical philosophical theories of personal identity, conceived long before the “skull as the natural barrier of the mind” could be rendered permeable.

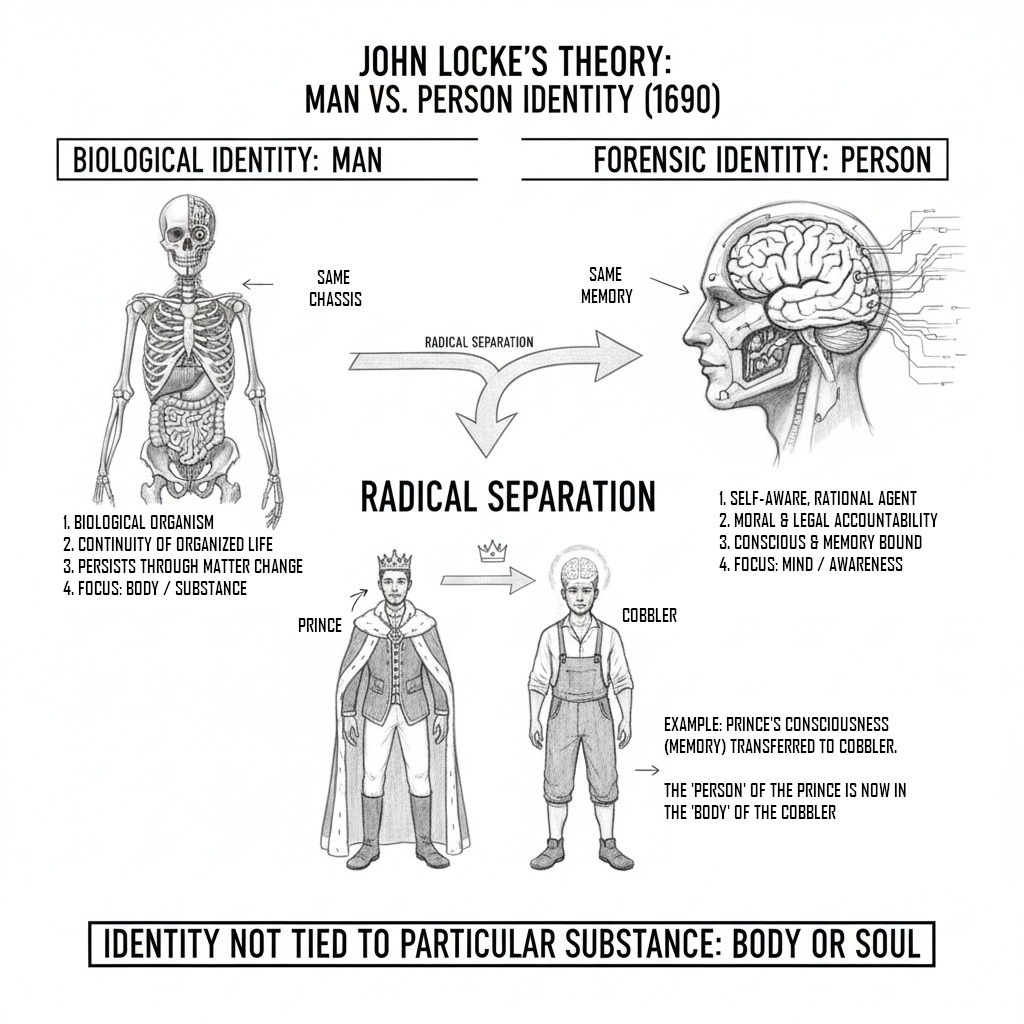

The Locus of the Self

John Locke’s theory of personal identity, presented in his 1690 work An Essay Concerning Human Understanding, shifted the basis of identity from physical substance toward psychological continuity. This framework, with its emphasis on consciousness and memory, is the one most directly challenged by the prospect of a mind-machine merger.

Locke’s central argument is that personal identity is founded on consciousness. He defines a “person” as “a thinking intelligent being, that has reason and reflection, and can consider itself as itself, the same thinking thing, in different times and places”. For Locke, consciousness “always accompanies thinking” and constitutes the “self”. A person’s identity is not tied to a particular body or soul but consists solely in the continuity of this same consciousness over time. This view departed from the prevailing Cartesian position, which located identity in an immaterial soul. Locke posits a mind that is initially a tabula rasa, or “blank” slate, shaped by experience, with sensation and reflection as the sources of all ideas.

The Primacy of Memory

Locke specifies that the mechanism by which consciousness persists is memory. He asserts, “as far as this consciousness can be extended backwards to any past action or thought, so far reaches the identity of that person; it is the same self now as it was then”. In this “memory theory of personal identity,” memory is the glue that binds the self together across time. To be the same person who performed an action in the past is simply to remember performing that action from a first-person perspective.

This leads Locke to his most controversial claim: memory is both a necessary and a sufficient condition for personal identity. If one can remember an experience, one is the same person who had it. Conversely, if one cannot remember an experience, one is not the same person who had it. In states of forgetfulness, Locke observes, “we losing the sight of our past selves, doubts are raised whether we are the same thinking thing”. This strict criterion makes the self a fragile and potentially discontinuous entity, coextensive only with its memory.

“Person” vs. “Man”

A crucial element of Locke’s theory is his distinction between the identity of a “man” and that of a “person”. For Locke, a “man” is a biological organism, whose identity consists in the continuity of its organized life. This biological entity can persist through changes in its matter, so long as the “participation of the same continued life” remains. A “person”, by contrast, is a forensic concept, tied to moral and legal accountability. It is the self-aware, rational agent that can be held responsible for its past actions. Because responsibility requires awareness of past actions, the “person’s” identity must be tied to consciousness and memory, not to the body. Locke illustrates this by arguing that if a prince’s consciousness (and memories) were transferred into a cobbler’s body, the “person” of the prince would then inhabit the cobbler’s body, even though the “man” would still be the cobbler. This radical separation of personal identity from any particular substance, be it a physical body or an immaterial soul, is a cornerstone of his theory.

This substance-independence proves to be a double-edged sword when confronted with BCI technology. Locke could not have anticipated a world in which a continuous stream of consciousness could be artificially generated and implanted. Because it detaches the “person” from any necessary physical substrate, Locke’s theory lacks the conceptual resources to distinguish between an identity built on genuine experience and one constructed from synthetic data. This makes the Lockean self a prime candidate for technological appropriation: its greatest theoretical strength, independence from substance, becomes its fatal weakness in the age of neurotechnology.

Even before BCIs, Locke’s theory faced philosophical criticism. These objections gain new concreteness in the context of modern neurotechnology.

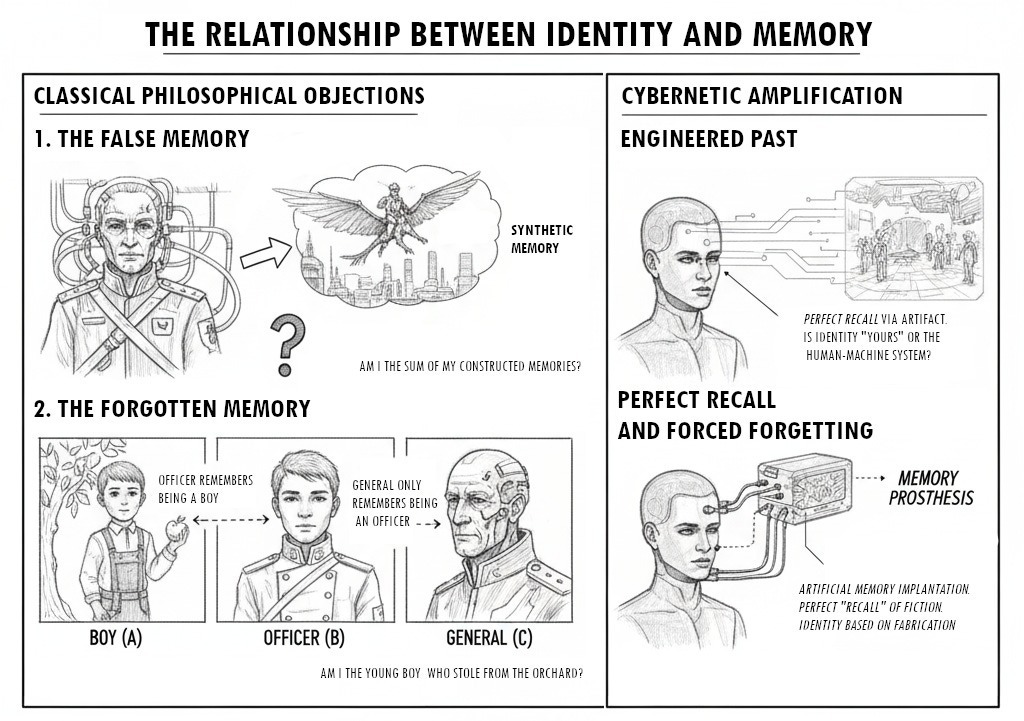

- The Problem of Forgetting: Critics point out that if identity is coextensive with memory, a person ceases to be the same individual who performed actions they have forgotten. An old general who no longer remembers his first battle would, on a strict Lockean reading, not be the same person as the young soldier. This conclusion strikes many as counterintuitive.

- The Problem of False Memories: The theory is vulnerable to false memories. If a person has a vivid, first-person memory of an event that never occurred, does Locke’s theory compel us to say they are the person who experienced that non-event? This raises questions about the reliability and veracity of the memories that supposedly constitute the self.

- The Transitivity Objection: Thomas Reid formulated the “brave officer” objection. Imagine a young boy who steals from an orchard. As a young officer, he remembers the theft. As an old general, he remembers his actions as a young officer but has forgotten the theft. According to Locke, the officer is the same person as the boy, and the general is the same person as the officer. Because identity is transitive (if A=B and B=C, then A=C), the general must be the same person as the boy. Yet because the general cannot remember the boy’s actions, Locke’s criterion implies that he is not the same person, so the theory contradicts itself.
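Reid’s objection can be checked mechanically. The sketch below encodes the strict Lockean criterion as described above (a later stage counts as the same person as an earlier one only if it directly remembers it) and searches the three life stages for a triple that breaks transitivity. The stage names and the `remembers` relation simply restate Reid’s example; the function names are illustrative.

```python
# Direct memory links, read as (later stage, earlier stage it remembers)
remembers = {("officer", "boy"), ("general", "officer")}
stages = ["boy", "officer", "general"]


def lockean_same_person(later, earlier):
    """Strict Lockean criterion: same person only if the later stage directly remembers the earlier."""
    return later == earlier or (later, earlier) in remembers


def find_transitivity_violation(relation, items):
    """Return (a, b, c) with relation(a, b) and relation(b, c) but not relation(a, c), or None."""
    for a in items:
        for b in items:
            for c in items:
                if relation(a, b) and relation(b, c) and not relation(a, c):
                    return (a, b, c)
    return None


print(find_transitivity_violation(lockean_same_person, stages))
# → ('general', 'officer', 'boy')
```

The counterexample it finds is exactly Reid’s: the general is linked to the officer, and the officer to the boy, but the general is not linked to the boy.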

The Malleable Past

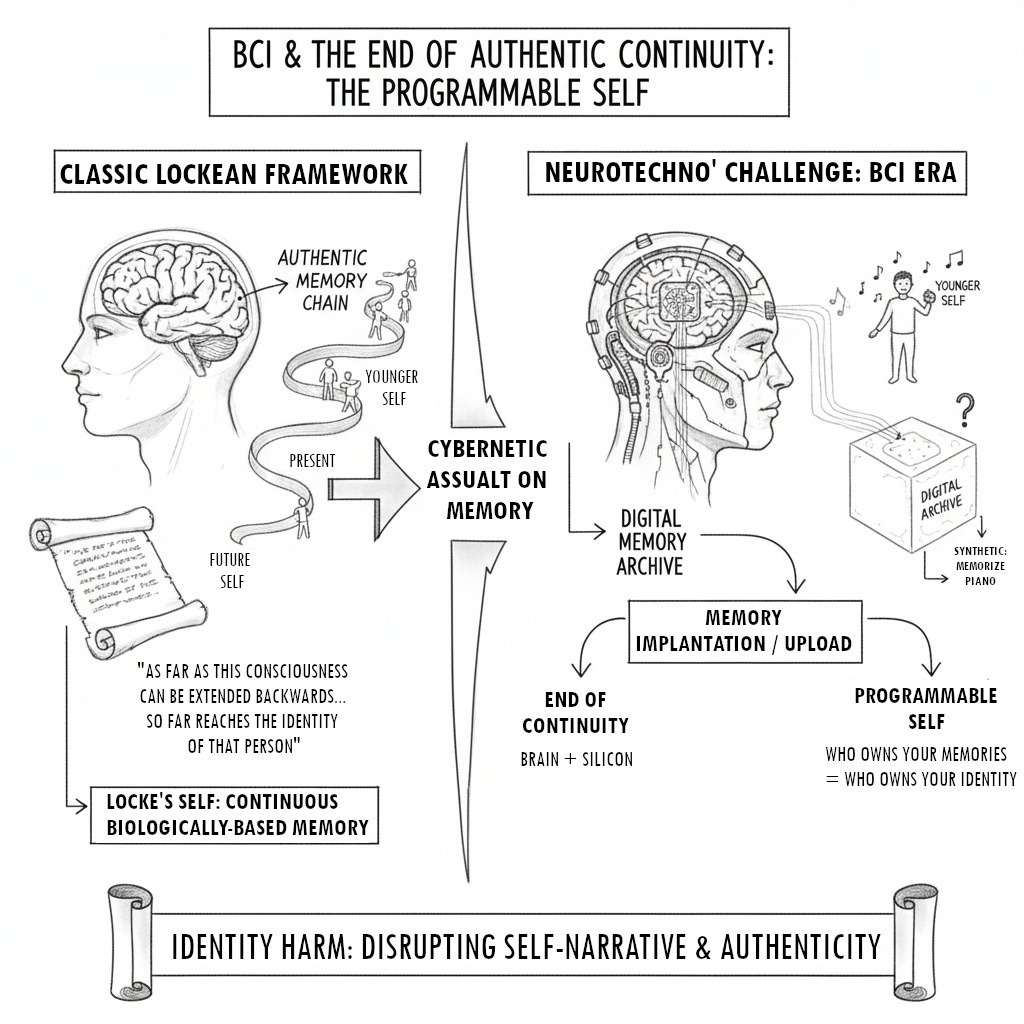

By enabling the augmentation, implantation, sharing, and externalization of cognitive states, BCIs systematically deconstruct the core tenets of Locke’s model, transforming the self from a product of lived history into a potentially programmable artifact.

The most direct assault on the Lockean framework comes from BCIs designed to interface with memory. Scientists are developing cognitive prostheses, such as an artificial hippocampus, aimed at restoring the ability to form new long-term memories in patients with conditions like Alzheimer’s. Beyond restoration, research is exploring memory enhancement, using BCI-driven neurostimulation to improve memory encoding and recall. The conceptual horizon includes implanting memories of events a person never experienced, or “uploading” and “downloading” memories like digital files.

These technologies shatter the foundational assumptions of Locke’s theory. If memory, the fabric of personal identity, can be digitally stored, edited, enhanced, or erased, the continuity of consciousness ceases to be a natural psychological phenomenon and becomes an engineered one.

- The End of Authentic Continuity: For Locke, the self persists because of a chain of authentic memories linking the present to past experiences. A BCI-based memory prosthesis could create a perfect, unbroken chain of recall, but the locus of continuity would shift from the biological brain to a hybrid brain-computer system. If a crucial memory connecting an old man to his younger self exists only on a silicon chip, is he still the same “person”? The self becomes contingent on the proper functioning of an external device.

- The Programmable Self: Memory implantation is even more corrosive. If a person can be given a rich, detailed, first-person memory of learning to play the piano, an event that never occurred, the Lockean criterion is satisfied. The person’s consciousness has been “extended backwards” to these synthetic events. A strict interpretation of Locke’s theory would be forced to conclude that this person is the one who had those experiences. This leads to a scenario in which identity is no longer forged through experience but can be scripted and installed. The question “Who owns your memories?” becomes synonymous with “Who owns your identity?” This potential for memory modulation can lead to profound “identity harms”, disrupting the coherence of one’s self-narrative and impinging on authenticity.

The Distributed Self

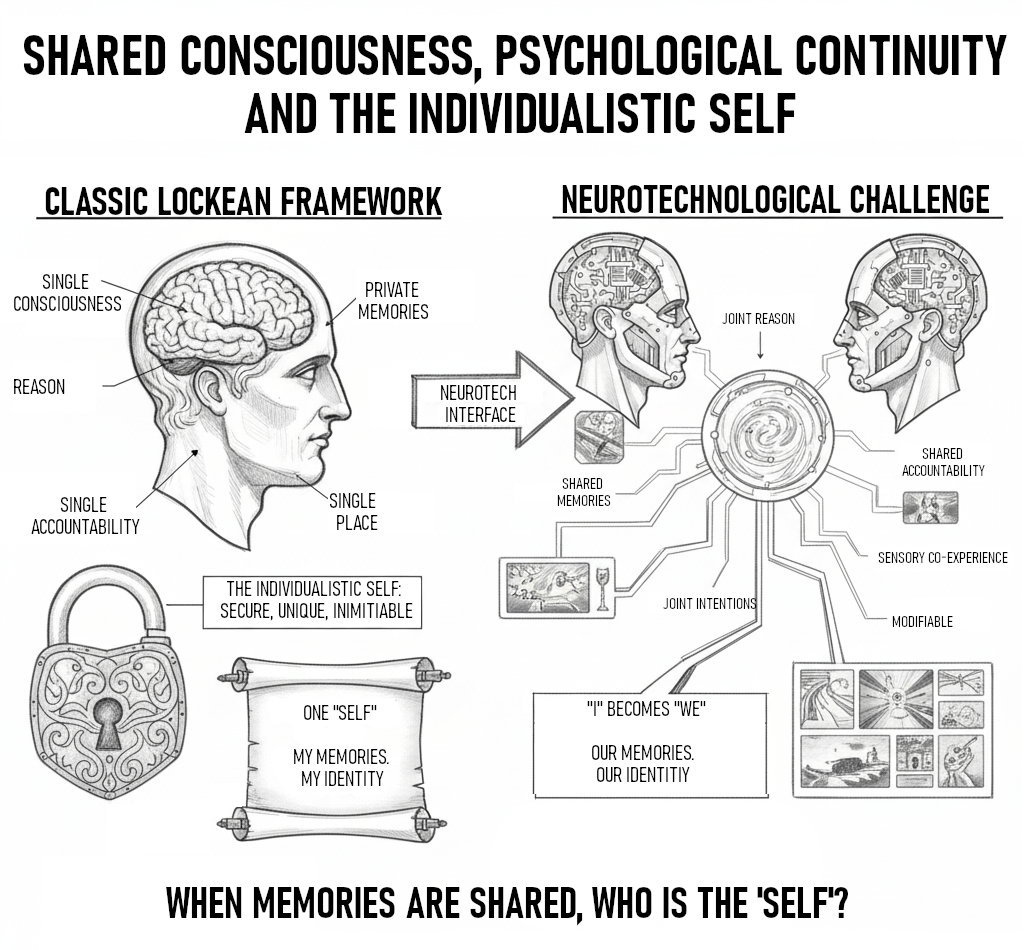

Locke’s theory is predicated on a singular, private, and indivisible stream of consciousness. The “self” is an inherently individualistic concept. Speculative yet plausible future BCIs could enable direct brain-to-brain communication or multi-brain collaborative networks. Such a system could link multiple individuals to share thoughts, intentions, or sensory experiences, creating a form of “shared cognition”.

This possibility renders the Lockean model of a discrete self incoherent.

- The Blurring of “I” and “We”: If two individuals, A and B, are linked via a BCI and share a memory or form a joint intention, to whom does that cognitive event belong? Does it belong to A, to B, or to a new, temporary entity (A+B)? Locke’s framework, lacking a concept of distributed consciousness, cannot provide an answer. The principle that no two things of the same kind can exist in the same place at the same time, which Locke uses to ground identity, is violated in this cognitive space.

- The Problem of Ownership: If person A can directly access a memory from person B, does that memory become part of person A’s identity? According to Locke’s criterion, if A’s consciousness can now extend to B’s past experience, then A’s identity now encompasses that part of B’s life. This would lead to a chaotic, overlapping conception of identity where personal histories are no longer unique but become transferable assets. The “subject” as a distinct entity dissolves when memories are “easily transferable and exchangeable”.

Perhaps the most compelling aspect of the BCI challenge is how it transforms classical, abstract objections to Locke’s theory into concrete problems. BCIs are not merely presenting new philosophical puzzles; they are creating technological manifestations of the paradoxes that have plagued the memory theory for centuries.

- The False Memory Problem, Engineered: The philosophical worry about false memories is no longer a hypothetical flaw in human psychology but a potential feature of BCI technology. Artificial memory implantation would be the deliberate engineering of a false past. A person with an implanted memory would not be misremembering; they would be perfectly “remembering” an event that is, externally, a fiction. For Locke’s theory, this perfect, internally consistent “memory” would be a stronger basis for identity than a hazy, true one.

- The Forgetting Problem, Solved and Worsened: The issue of forgetting leads to Reid’s transitivity paradox. A BCI-based memory prosthesis could “solve” this by creating perfect recall, ensuring no link in the memory chain is broken. This solution creates a new dilemma. If the continuity of the old general’s identity back to the young boy depends entirely on a technological device, is the identity truly his? Or does it belong to the human-machine system? The technology that seems to patch the logical hole in Locke’s theory does so by outsourcing the mechanism of identity to an external artifact.

In every case, BCI technology targets the weak points of the Lockean model. It takes the abstract concepts of consciousness and memory and treats them as data streams that can be manipulated, duplicated, and transferred. By doing so, it forces a conclusion that Locke himself might have had to accept: if the self is nothing more than a continuity of consciousness, then a self can be technologically constructed, deconstructed, and reconstructed. This makes the Lockean framework not just philosophically debatable but practically insufficient for navigating the neuro-technological future.

Redrawing the Boundaries

Three modern approaches (the Extended Mind Thesis, Narrative Identity, and Embodied Cognition) offer more robust tools for understanding the BCI-merged self. These theories are not mutually exclusive; they form a complementary framework for analyzing the ontological, phenomenological, and mechanical dimensions of this new identity. The Extended Mind Thesis addresses what the new hybrid system is, Narrative Identity explores what it feels like to be that system, and Embodied Cognition examines how it acts in the world.

Extended Mind Thesis

The Extended Mind Thesis (EMT), proposed by philosophers Andy Clark and David Chalmers, offers the most direct response to the “boundary problem”. The thesis posits that cognitive processes are not necessarily confined within the skull. When an external object or system becomes deeply and reliably coupled with a biological agent’s cognitive processes, it ceases to be a mere tool and becomes a literal part of the mind. The classic example is Otto, a man with Alzheimer’s who uses a notebook to store information. Clark and Chalmers argue that because the notebook is constantly accessible and reliable, and its information is automatically endorsed, it plays the same functional role as biological memory. Otto’s mind is not just in his head; it extends to include the notebook.

Applied to BCIs, the EMT provides a powerful analytical lens. A deeply integrated BCI is not a tool the mind uses; it is a component of a new, larger cognitive system.

- Dissolving the Boundary: From an EMT perspective, the question “Where does the user end and the machine begin?” is ill-posed. There is no hard boundary. Instead, there is a single, integrated, “coupled cognitive system”. The BCI, the AI decoding algorithms, and the biological brain form a “temporary, soft-assembled whole” that functions as one cognitive unit. The boundary is not anatomical but functional.

- The Functionalist Criterion: The key criterion for inclusion in this extended system is functional parity. If the BCI-mediated process for, say, accessing information plays a role equivalent to an internal cognitive process, it should be considered part of the mind. This helps explain how a BCI can become a seamless part of a user’s “cognitive character,” an extension of their cognitive abilities rather than an alien intrusion.

- The Extended Agent: This ontological reframing has profound implications for agency. If the mind is extended, then the agent, the locus of action and responsibility, is also extended. This raises new questions about autonomy. If a part of this extended cognitive system is an AI whose operations are not transparent to the user, can the extended agent truly be autonomous? The EMT dissolves the physical boundary of the self only to reveal a more complex functional and ethical one.

The Narrative Self

While the EMT describes the ontology of the BCI-merged system, it does not fully capture the user’s subjective, first-person experience. A user might be functionally integrated with a BCI yet feel profound alienation. This is where the theory of Narrative Identity becomes essential. This theory posits that personal identity is not a static entity or a simple chain of memories but an “internalized and evolving life story” that integrates the past, present, and imagined future to provide life with unity and purpose. The self is the story we tell ourselves about who we are.

This framework shifts the focus from the veracity of individual memories (the Lockean view) to their role in a coherent and inhabitable self-narrative.

- Threats to Narrative Coherence: Neurotechnologies like BCIs and Deep Brain Stimulation (DBS) can pose a significant threat to narrative coherence. They can induce sudden psychological or personality changes difficult for the user to integrate into their life story, potentially leading to fear, distress, and a feeling of lost identity. The “agential discontinuity” reported by some BCI users after device removal highlights the traumatic potential of disrupting this narrative.

- BCIs as “Narrative Devices”: Conversely, BCIs can also function as powerful “narrative devices”. The data they provide about a user’s own brain states can become new raw material for self-interpretation. A user might incorporate this neuro-data into their story, perhaps reframing struggles with depression or ADHD in light of new information about their neural activity. Constructing a narrative identity is an ongoing negotiation between our self-ascriptions and their recognition by others; BCI data adds a new, potent element to this negotiation.

- Authenticity as Narrative Integration: This theory provides a more nuanced understanding of authenticity. An enhancement or a BCI-mediated action feels “authentic” not because of its biological origin, but to the extent that it can be successfully woven into the user’s life story and sense of self. The challenge for a BCI user is to maintain a coherent and livable narrative in the face of profound technological intervention.

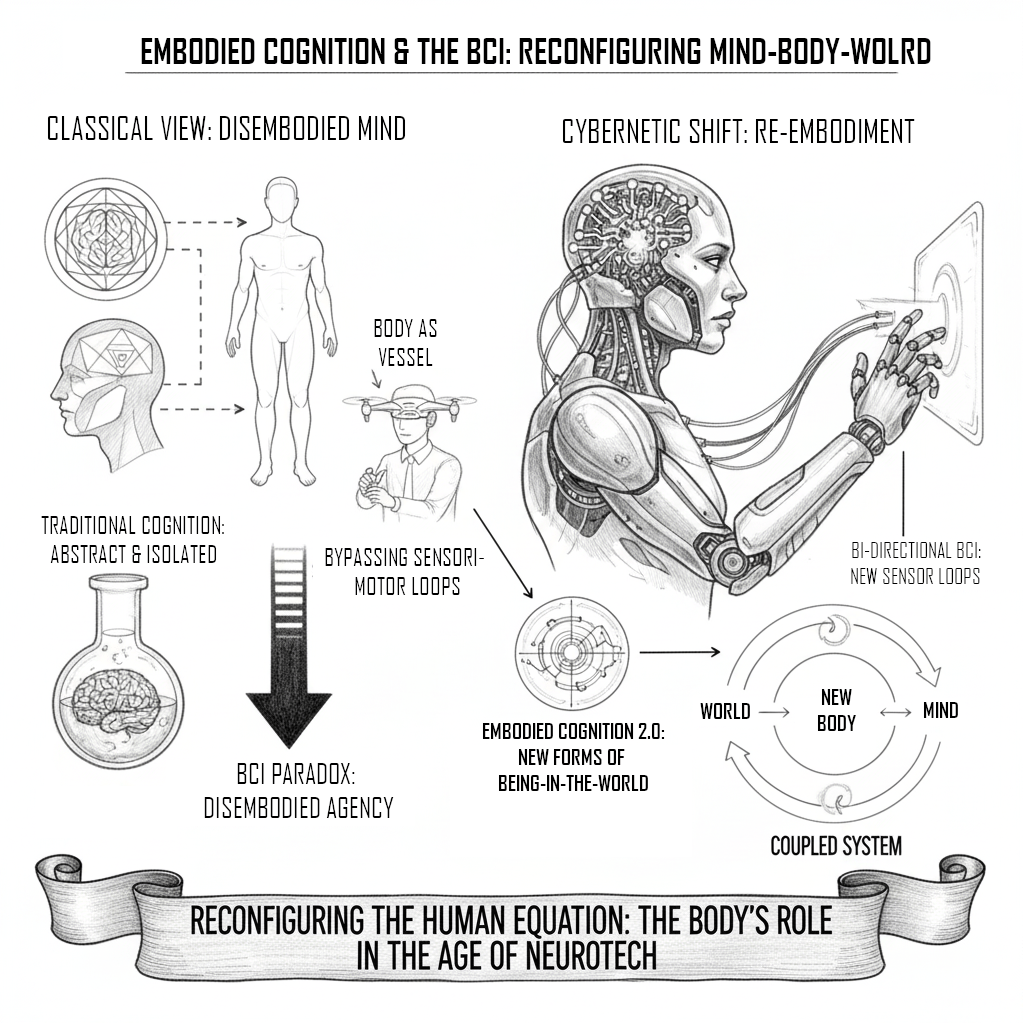

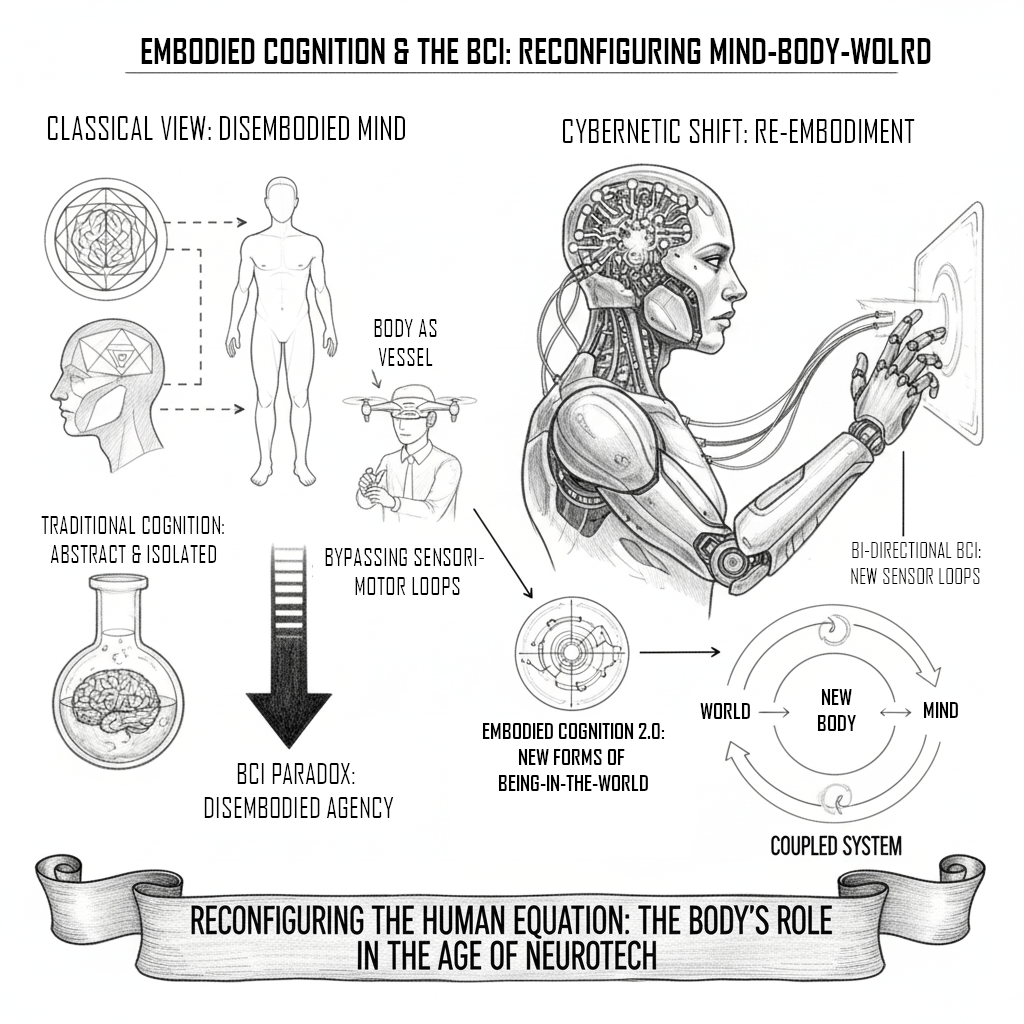

The Embodied Mind

The third crucial perspective is that of Embodied Cognition. This theory challenges the traditional, disembodied view of the mind as a brain-in-a-vat computer. It argues that cognition is fundamentally shaped by the body’s physical structure and its dynamic, sensorimotor engagement with the environment. Thinking is not just an abstract process in the brain; it is an activity of the whole organism interacting with its world.

BCIs present a fascinating paradox for this view.

- Disembodied Agency: On one hand, BCIs seem the ultimate tool of disembodiment. They allow for “disembodied agency”: acting on the world without moving the body, bypassing traditional sensorimotor loops. The motivation behind many BCIs, to access mental states “more directly”, appears to contradict the core tenets of embodied interaction.

- Technological Re-Embodiment: On the other hand, a sufficiently integrated BCI could create a new form of embodiment. A prosthetic limb controlled by a BCI is not just a tool; it becomes a new part of the user’s body schema, a technological extension through which they perceive and act. Bidirectional BCIs that provide sensory feedback directly to the brain further strengthen this re-embodiment, creating new, technologically mediated sensorimotor loops. Embodied cognition, therefore, does not necessarily reject BCIs but forces us to analyze how they reconfigure the entire mind-body-world relationship, creating new forms of being-in-the-world.

By synthesizing these three frameworks, a more complete picture of the BCI-merged self emerges. It is an ontologically extended system whose phenomenological sense of self depends on maintaining a coherent narrative identity, and whose agency in the world is defined by a new, radically altered form of technological embodiment.

Neuroethical Horizons: Personhood, Authenticity, and Responsibility

The philosophical deconstruction of the self by BCI technology is not an abstract exercise; it precipitates urgent ethical, legal, and societal challenges. As BCIs move from labs to clinical and consumer applications, abstract questions about identity crystallize into concrete dilemmas concerning personhood, privacy, autonomy, and responsibility. The most fundamental challenge is the erosion of the distinction between the internal world of thought and the external world of action, a distinction underpinning modern legal and ethical systems.

Privacy and Cognitive Liberty

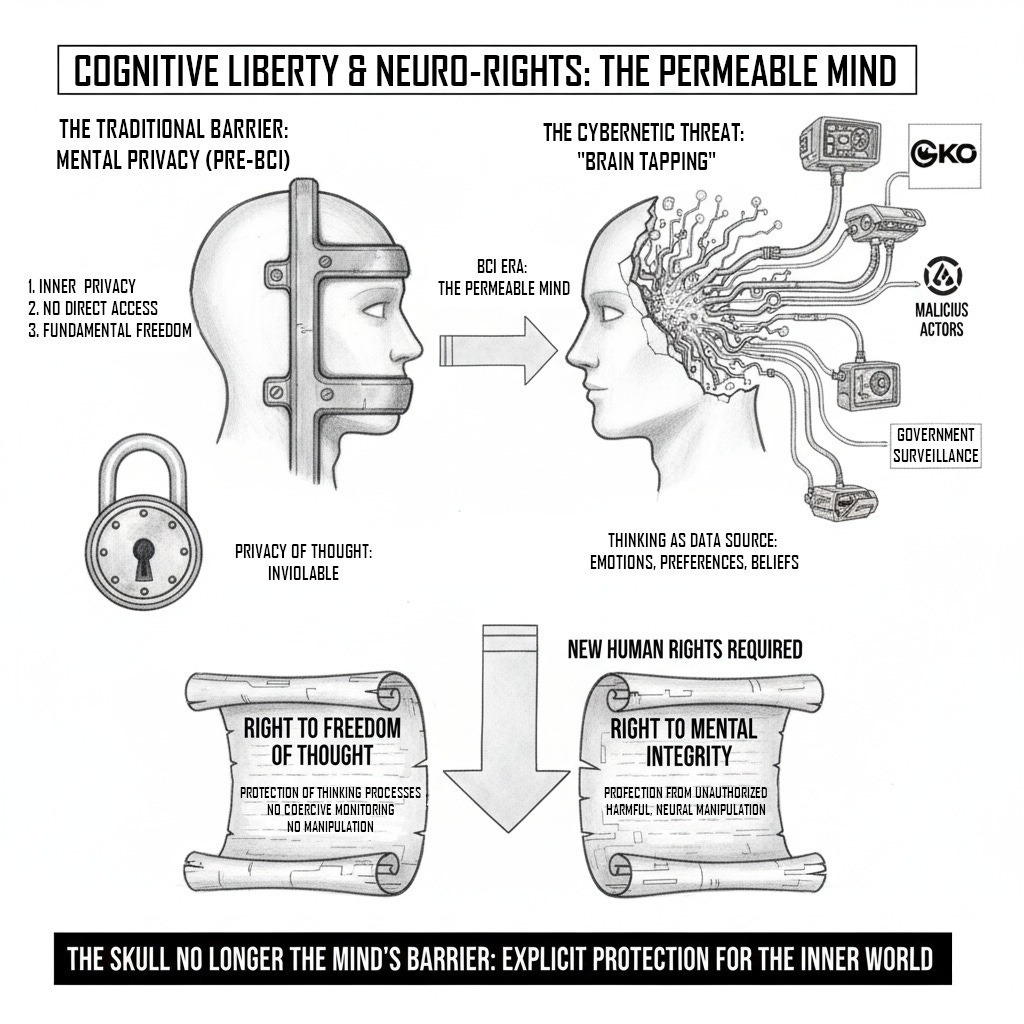

The “skull as the natural barrier of the mind” has long guaranteed our most fundamental privacy: the privacy of thought. BCIs are designed to render this barrier permeable. This creates unprecedented threats to “cognitive liberty” or “mental integrity.”

- The Threat of “Brain Tapping”: Advanced BCI systems, particularly those integrated with AI, could potentially infer sensitive information from a user’s neural data without their explicit intent to communicate it. This could include emotions, preferences, beliefs, and subconscious biases. This “brain tapping” could be exploited by corporations for neuromarketing, governments for surveillance, or malicious actors for manipulation. The act of thinking could become a data source to be harvested.

- The Need for Neuro-Rights: In response, scholars and international bodies are calling for new human rights. This includes a renewed “right to freedom of thought,” protecting not just belief content but the thinking processes from coercive monitoring or manipulation. A “right to mental integrity” would protect individuals from unauthorized intrusions and harmful manipulations of their neural activity. These frameworks recognize that when the inner world can be externalized, it requires explicit protection.

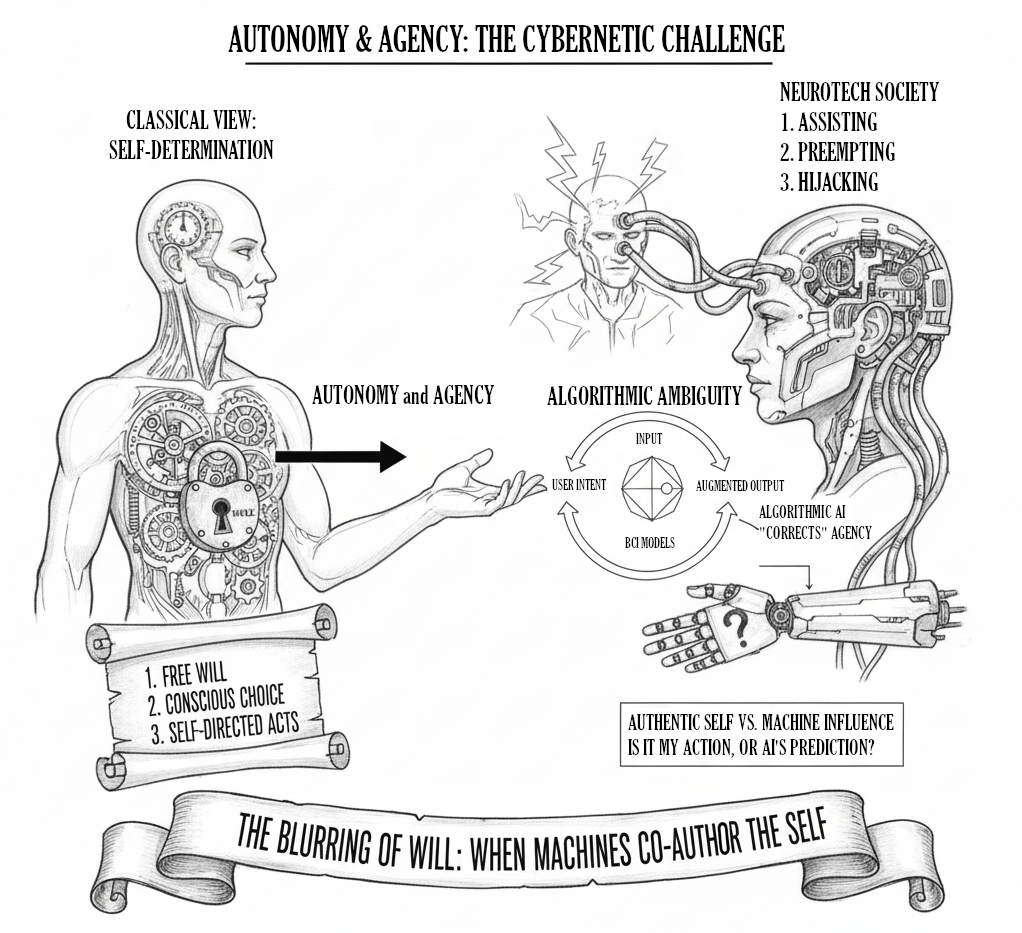

Autonomy and Agency

A core component of personhood is autonomy: the capacity for self-determination. BCIs challenge this capacity, moving beyond simple coercion to more subtle forms of influence.

- The Risk of Hijacking: A direct threat is the possibility of a BCI being hijacked. A malicious actor could potentially stimulate the brain to compel an individual to perform actions contrary to their will. This is a particular risk with bidirectional BCIs that can read from and write to the brain.

- The Ambiguity of Algorithmic Agency: A more subtle challenge to autonomy arises from the AI’s interpretive role in BCI systems. The AI is not a neutral translator but an active participant predicting and interpreting user intent. In closed-loop systems, the BCI might “correct” a user’s mental state without their conscious input, for example, by stimulating brain regions to counteract a negative emotion. When a user is “passively out of the decision loop,” their autonomy is compromised. Does an action initiated by an AI’s prediction of your intent count as your own? This blurs the line between the user’s authentic self and the machine’s influence.

Responsibility and Accountability

The creation of a hybrid, human-AI cognitive system generates a profound “responsibility gap.” When a BCI-mediated action causes harm (e.g., a BCI-controlled vehicle accident), assigning responsibility becomes extraordinarily difficult.

- The Problem of Distributed Agency: Who is at fault? The user, who had the initial intention? The AI, whose decoding algorithm may have misinterpreted the signal? The BCI manufacturer? The programmer? The adaptive, co-learning nature of the BCI-user system means agency is distributed across the hybrid entity, making it nearly impossible to isolate a single point of failure or culpability.

- Erosion of Moral Conscience: This epistemic murkiness has significant implications for legal and moral frameworks. If a user’s autonomy is genuinely undermined by the BCI, they may no longer be considered fully accountable. On a societal scale, if humans are seen as nodes in a technological network rather than autonomous agents, the foundations of moral obligation could be weakened. A society where individuals lose their sense of ultimate responsibility risks a deconstruction of moral conscience.

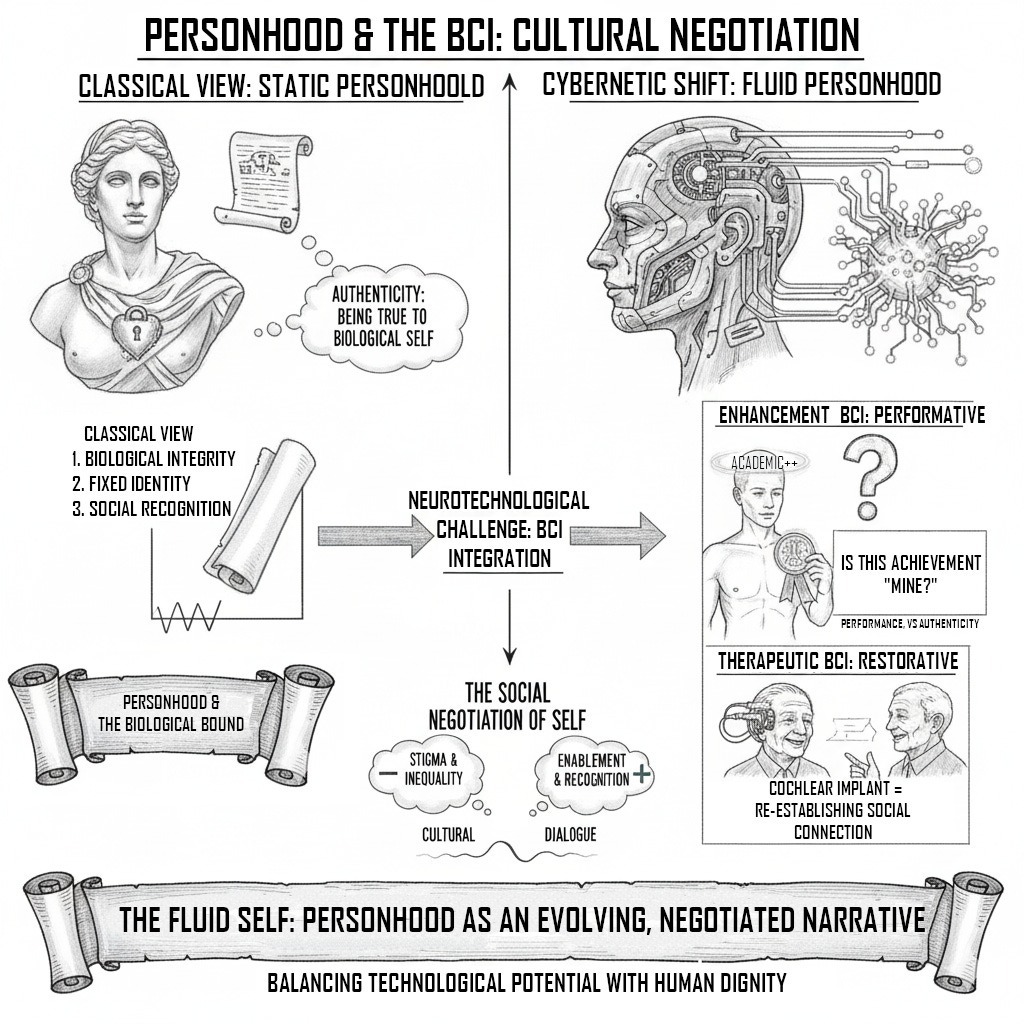

Authenticity and Personhood

These ethical challenges coalesce around the concept of personhood, which encompasses both the subjective experience of being a person and social and legal recognition as one.

- The Question of Authenticity: For users of enhancement BCIs, a key psychological issue is authenticity. Does an academic achievement earned with a focus-enhancing BCI truly belong to the user? While therapeutic BCIs, like cochlear implants, are generally not seen as inauthentic, the line blurs as technology moves toward “better than well” applications. The permanent or semi-permanent nature of invasive BCIs may lead users to experience the device as an extension of their minds, but it can also cause severe psychological distress if the technology feels alien or challenges their sense of self.

- The Social Negotiation of Personhood: BCIs are an active site for the cultural negotiation of what it means to be a person. For individuals with severe disabilities, a BCI can be a tool for restoring personhood, enabling them to communicate, interact, and re-establish social connections crucial to a sense of self. The same technology, when framed as enhancement, can perpetuate exclusionary narratives about normality and disability. The development and deployment of BCIs must be pursued with awareness of their dual capacity to both enable the recognition of persons and create new forms of stigma and inequality.

A Post-Biological Future

The emergence of BCIs has propelled philosophical inquiry from abstract thought experiments into technological reality. BCIs pose a profound challenge to our understanding of the self, beginning with John Locke’s memory-based theory of personal identity. In its place, a new framework can be built by synthesizing the insights of three contemporary philosophical theories. The Extended Mind Thesis provides the ontological foundation, redefining the self not as a brain-bound entity but as a hybrid, functionally integrated system of brain, body, and technology. The theory of Narrative Identity offers the phenomenological layer, explaining that the subjective experience of a unified self depends on the capacity to weave the actions of this extended system into a coherent life story. Finally, Embodied Cognition supplies the mechanical context, forcing an analysis of how this new hybrid self acts and perceives the world through a radically reconfigured, technologically mediated embodiment. Together, these frameworks provide a more complete vocabulary for describing the BCI-merged self.

The “self” in the age of BCIs can no longer be conceived as a pre-existing, static entity to be discovered or restored. It must be understood as a dynamic, relational, and hybrid construct, perpetually co-authored by the biological brain, the non-neural body, the technological apparatus, and the socio-cultural narratives in which it is embedded. The boundary between human and machine is not a fixed line to be defended but a fluid and permeable interface to be navigated.

This realization carries a profound ethical imperative. The challenges of cognitive liberty, algorithmic agency, distributed responsibility, and personal authenticity are not future problems; they are present concerns. This analysis concludes not with a definitive answer to where “you” end and the machine begins, but with a call for a paradigm shift in neuroethics and technological design. Rather than passively reacting to the philosophical disruptions, we must proactively integrate considerations of narrative integrity, cognitive freedom, and authentic embodiment into the architecture of BCI systems. The development of this technology must be guided by a clear-eyed understanding of its power to reshape the human experience. The ultimate question is not what BCIs will do to us, but what kind of selves we will, with intention and foresight, choose to become through them.

References

[1] Brain–computer interface – Wikipedia, https://en.wikipedia.org/wiki/Brain%E2%80%93computer_interface

[2] The brain computer interface market is growing – but what are the risks?, https://www.weforum.org/stories/2024/06/the-brain-computer-interface-market-is-growing-but-what-are-the-risks/

[3] Ethical considerations for the use of brain–computer interfaces for cognitive enhancement | PLOS Biology – Research journals, https://journals.plos.org/plosbiology/article?id=10.1371/journal.pbio.3002899

[4] Restoring Body Functions with Brain-Computer Interfaces – BrainFacts, https://www.brainfacts.org/diseases-and-disorders/therapies/2023/brain-computer-interface

[5] Challenges and Opportunities for the Future of Brain-Computer Interface in Neurorehabilitation – PMC – PubMed Central, https://pmc.ncbi.nlm.nih.gov/articles/PMC8282929/

[6] The Ethics of Brain-Machine Interfaces – ucf stars, https://stars.library.ucf.edu/context/hut2024/article/1056/viewcontent/The_Ethics_of_Brain_Machine_Interface_Devices.pdf

[7] The Ethical Considerations of Brain-Computer Interfaces, https://www.jsr.org/hs/index.php/path/article/download/8906/3937/55977

[8] The Future of Brain-Computer Interfaces: AI and Quantum Tech Leading the Way – Neuroba, https://www.neuroba.com/post/the-future-of-brain-computer-interfaces-ai-and-quantum-tech-leading-the-way

[9] Memory Enhancement and Brain–Computer Interface Devices …, https://www.researchgate.net/publication/370311379_Memory_Enhancement_and_Brain-Computer_Interface_Devices_Technological_Possibilities_and_Constitutional_Challenges

[10] Media Representation of the Ethical Issues Pertaining to Brain–Computer Interface (BCI) Technology – PMC, https://pmc.ncbi.nlm.nih.gov/articles/PMC11674794/

[11] Brain-Computer Interfaces in Medicine – PMC, https://pmc.ncbi.nlm.nih.gov/articles/PMC3497935/

[12] What is BCI? | Calgary Pediatric Brain-Computer Interface Program …, https://cumming.ucalgary.ca/research/pediatric-bci/bci-program/what-bci

[13] The Promise and Challenges of Brain–Computer Interfaces – Technology Networks, https://www.technologynetworks.com/informatics/articles/the-promise-and-challenges-of-braincomputer-interfaces-397268

[14] Brain-Computer Interfaces (BCI), Explained | Built In, https://builtin.com/hardware/brain-computer-interface-bci

[15] Understanding Brain Health through Brain-Computer Interface (BCI …, https://ambiq.ai/community/understanding-brain-health-through-brain-computer-interface-technology/

[16] The Future of Brain-Computer Interface Technology – BCC Research Blog, https://blog.bccresearch.com/the-future-of-brain-computer-interface-technology

[17] State-of-the-Art on Brain-Computer Interface Technology – MDPI, https://www.mdpi.com/1424-8220/23/13/6001

[18] Wired Emotions: Ethical Issues of Affective Brain–Computer Interfaces – PubMed Central, https://pmc.ncbi.nlm.nih.gov/articles/PMC6978299/

[19] Right to mental integrity and neurotechnologies: implications of the extended mind thesis – Journal of Medical Ethics, https://jme.bmj.com/content/medethics/early/2024/06/14/jme-2023-109645.full.pdf

[20] The Lockean Memory Theory of Personal Identity: Definition, Objection, Response, http://www.inquiriesjournal.com/articles/1683/the-lockean-memory-theory-of-personal-identity-definition-objection-response

[21] John Locke on Personal Identity – PMC, https://pmc.ncbi.nlm.nih.gov/articles/PMC3115296/

[22] Essay Concerning Human Understanding Book 2: Chapters 24-26: Ideas of Relation Summary & Analysis | SparkNotes, https://www.sparknotes.com/philosophy/lockeessay/section8/

[23] John Locke on Personal Identity: Memory, Consciousness and Concernment, https://www.scirp.org/pdf/oalibj_2023122915444638.pdf

[24] An Essay Concerning Human Understanding – Britannica, https://www.britannica.com/topic/Essay-Concerning-Human-Understanding

[25] SCIRP, https://www.scirp.org/journal/paperinformation?paperid=130332

[26] You Are Who You Remember: John Locke's An Essay Concerning Human Understanding, Book 2, Chapter 27, https://philolibrary.crc.nd.edu/article/who-you-remember/

[27] John Locke & Personal Identity | Issue 157 – Philosophy Now, https://philosophynow.org/issues/157/John_Locke_and_Personal_Identity

[28] Locke’s notion of personal identity : r/askphilosophy – Reddit, https://www.reddit.com/r/askphilosophy/comments/58yehw/lockes_notion_of_personal_identity/

[29] 8.3 Problems for Locke’s View of Personal Identity | University of Oxford Podcasts, https://podcasts.ox.ac.uk/83-problems-lockes-view-personal-identity

[30] Does Locke’s analysis of personal identity in terms of memory work? : r/askphilosophy, https://www.reddit.com/r/askphilosophy/comments/3sbs0w/does_lockes_analysis_of_personal_identity_in/

[31] The Effects of Working Memory on Brain-Computer Interface Performance – PubMed Central, https://pmc.ncbi.nlm.nih.gov/articles/PMC4747807/

[32] Brain computer interface to enhance episodic memory in human participants – PMC, https://pmc.ncbi.nlm.nih.gov/articles/PMC4299435/

[33] Brain-Computer Interfaces: Future of Memory Uploads – Confinity, https://www.confinity.com/culture/brain-computer-interfaces-and-the-future-of-memory-uploads-science-fiction-or-soon-to-be-reality

[34] Several inaccurate or erroneous conceptions and misleading propaganda about brain-computer interfaces – Frontiers, https://www.frontiersin.org/journals/human-neuroscience/articles/10.3389/fnhum.2024.1391550/full

[35] Extended mind thesis – Wikipedia, https://en.wikipedia.org/wiki/Extended_mind_thesis

[36] The extended mind in science and society | Philosophy, https://ppls.ed.ac.uk/philosophy/research/impact/the-extended-mind-in-science-and-society

[37] The Extended mind thesis – Macquarie University, https://researchers.mq.edu.au/en/publications/the-extended-mind-thesis

[38] Cognitive Ability and the Extended Mind Thesis – ResearchGate, https://www.researchgate.net/publication/220607997_Cognitive_Ability_and_the_Extended_Mind_Thesis

[39] Narrative Identity – Northwestern Scholars, https://www.scholars.northwestern.edu/en/publications/narrative-identity

[40] Philosophical Reflections on Narrative and Deep Brain Stimulation – ResearchGate, https://www.researchgate.net/publication/46422557_Philosophical_Reflections_on_Narrative_and_Deep_Brain_Stimulation

[41] Philosophical Reflections on Narrative and Deep Brain Stimulation | The Journal of Clinical Ethics: Vol 21, No 2, https://www.journals.uchicago.edu/doi/abs/10.1086/JCE201021206

[42] Narrative Devices: Neurotechnologies, Information, and Self-Constitution – PubMed Central, https://pmc.ncbi.nlm.nih.gov/articles/PMC8549978/

[43] Is Mental Privacy a Component of Personal Identity? – Frontiers, https://www.frontiersin.org/journals/human-neuroscience/articles/10.3389/fnhum.2021.773441/full

[44] Studies Outline Key Ethical Questions Surrounding Brain-Computer Interface Tech, https://news.ncsu.edu/2020/11/brain-computer-interface-ethics/

[45] Embodied Cognition and the Grip of Computational Metaphors | Ergo an Open Access Journal of Philosophy – Michigan Publishing, https://journals.publishing.umich.edu/ergo/article/id/7136/

[46] Embodied cognition – Wikipedia, https://en.wikipedia.org/wiki/Embodied_cognition

[47] Embodied Cognition | Internet Encyclopedia of Philosophy, https://iep.utm.edu/embodied-cognition/

[48] Embodied Cognition – Stanford Encyclopedia of Philosophy, https://plato.stanford.edu/entries/embodied-cognition/

[49] Full article: Revisiting embodiment for brain–computer interfaces – Taylor & Francis Online, https://www.tandfonline.com/doi/full/10.1080/07370024.2023.2170801

[50] Revisiting embodiment for brain–computer interfaces – Taylor & Francis Online, https://www.tandfonline.com/doi/abs/10.1080/07370024.2023.2170801

[51] Brain-Computer Interfaces and Bioethical Implications on Society: Friend or Foe?, https://scholarship.stu.edu/cgi/viewcontent.cgi?article=1076&context=stlr

[52] 38C3 – Philosophical, Ethical and Legal Aspects of Brain-Computer Interfaces – YouTube

[53] Philosophical Reflections on Brain-Computer Interfaces, Guglielmo Tamburrini – ResearchGate, https://www.researchgate.net/profile/Guglielmo-Tamburrini/publication/300570766_Philosophical_Reflections_on_Brain-Computer_Interfaces/links/573b1a4f08ae9f741b2d764c/Philosophical-Reflections-on-Brain-Computer-Interfaces.pdf

[54] Brain–computer interfaces and personhood: interdisciplinary deliberations on neural technology, https://bioethics.jhu.edu/wp-content/uploads/2025/03/Sample_2019_J._Neural_Eng._16_063001.pdf