Disclaimer: A core part of this work is to educate and to promote transparency in the use of AI. Gemini was used throughout the creative process of generating this article.

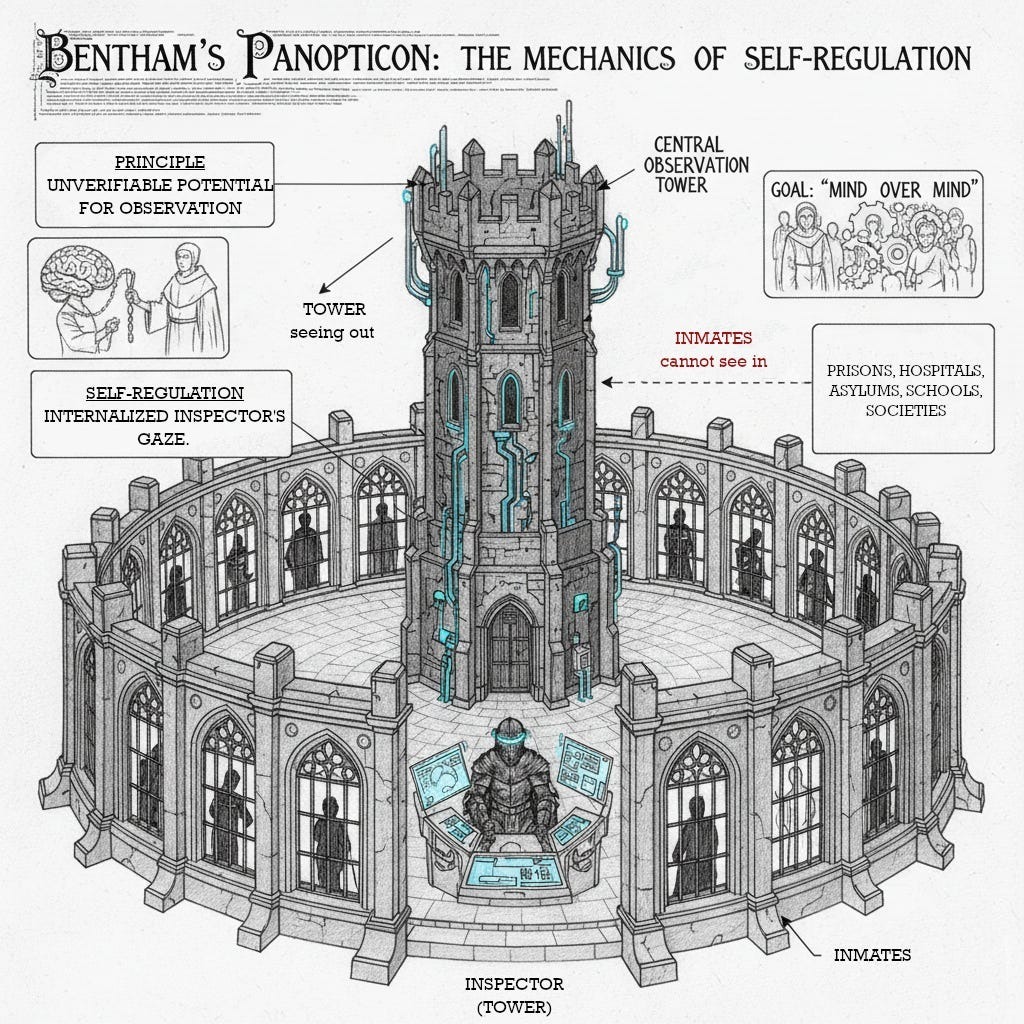

The contemporary technological landscape is defined by the promise of “ambient intelligence”: a world where environments respond seamlessly to our needs. This vision manifests in smart homes, wearable health devices, and connected cities, collectively the “Internet of Everything.” Yet this convenience rests on a paradox: its seamlessness depends on constant, unobtrusive, and pervasive data collection. The sensors and networks that make our world “smart” create an environment of perpetual observation, capturing granular details of daily human life. The ecosystem of ambient devices constitutes a new, more potent form of control, with the ability to predict and modify behavior for commercial and governmental ends.

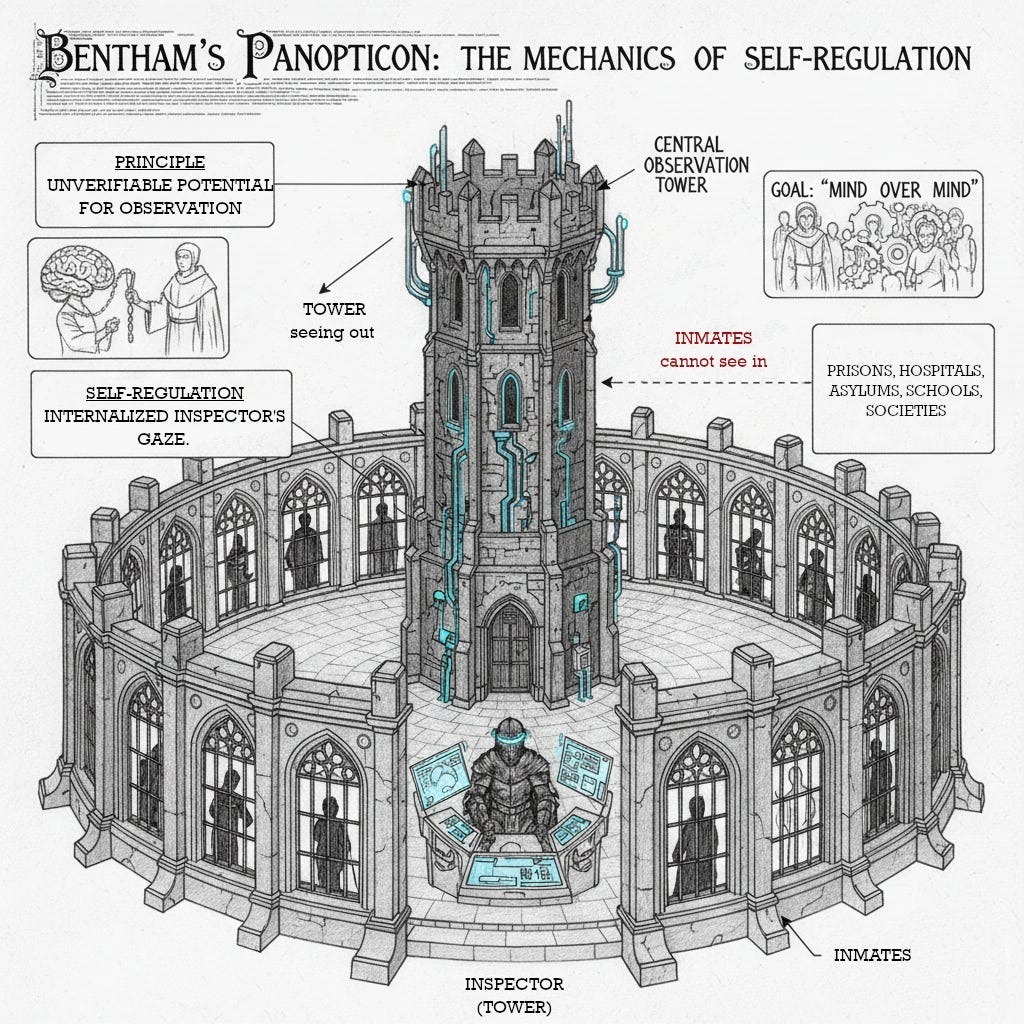

Bentham’s Panopticon: Self-Regulation

In the late 18th century, Jeremy Bentham conceived the Panopticon, a prison design for inmate reformation through constant surveillance. The architecture is key: a central observation tower stands within a circular building of individual cells. Each cell is backlit, allowing an inspector in the tower to see a silhouette of the inmate, while inmates cannot see into the darkened tower. This establishes an asymmetry of vision, a “see/being seen dyad” where the inspector is an invisible, all-seeing presence, and the inmate is perpetually visible.

The psychological principle is not that the inmate is always watched, but that they can never know when they are being watched. This state of conscious and permanent visibility, this unverifiable potential for observation, compels the inmate to regulate their own behavior, effectively internalizing the inspector’s gaze. Bentham described his design as “a new mode of obtaining power of mind over mind,” a control rooted in psychological manipulation rather than overt physical coercion. Although conceived for prisons, Bentham saw it as a generalizable model for factories, asylums, hospitals, and schools: any institution where the efficient ordering of human masses was desired.

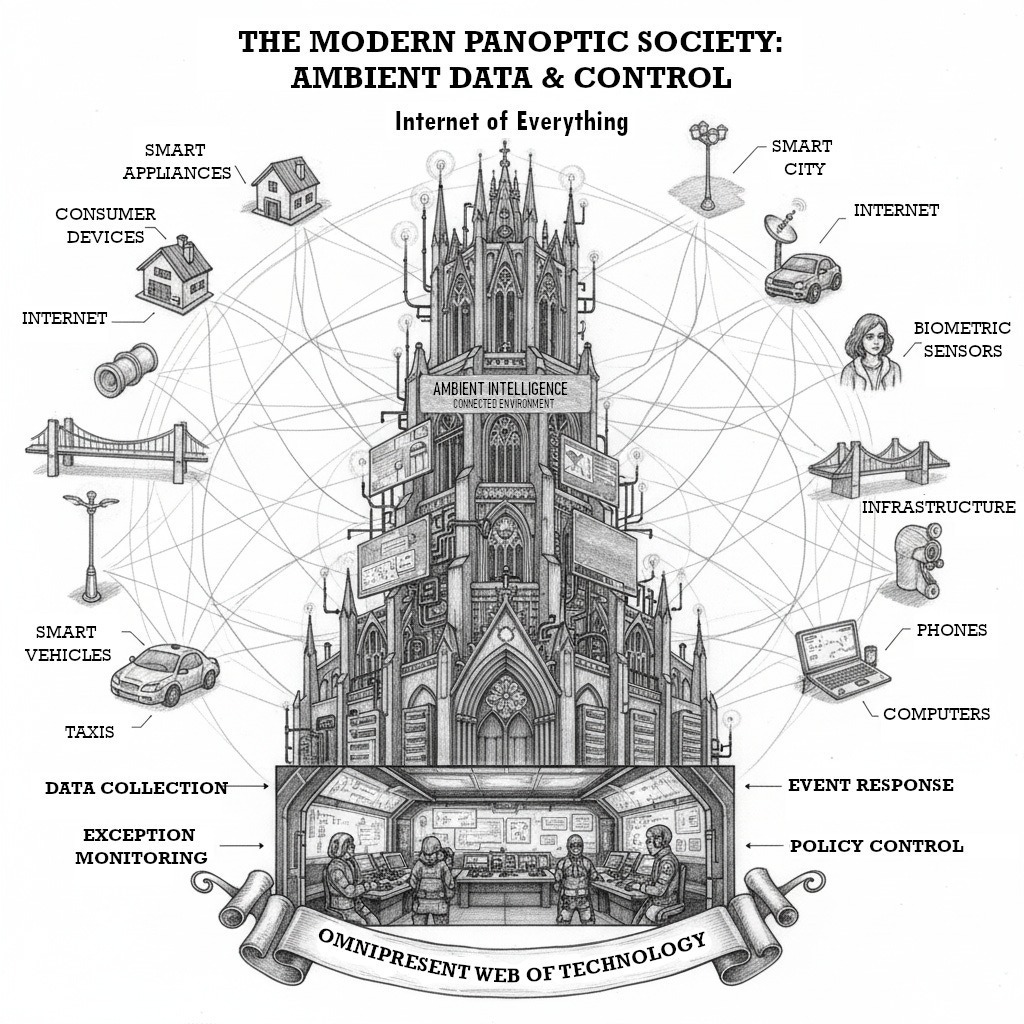

Foucault’s Panopticism: Disciplinary Power

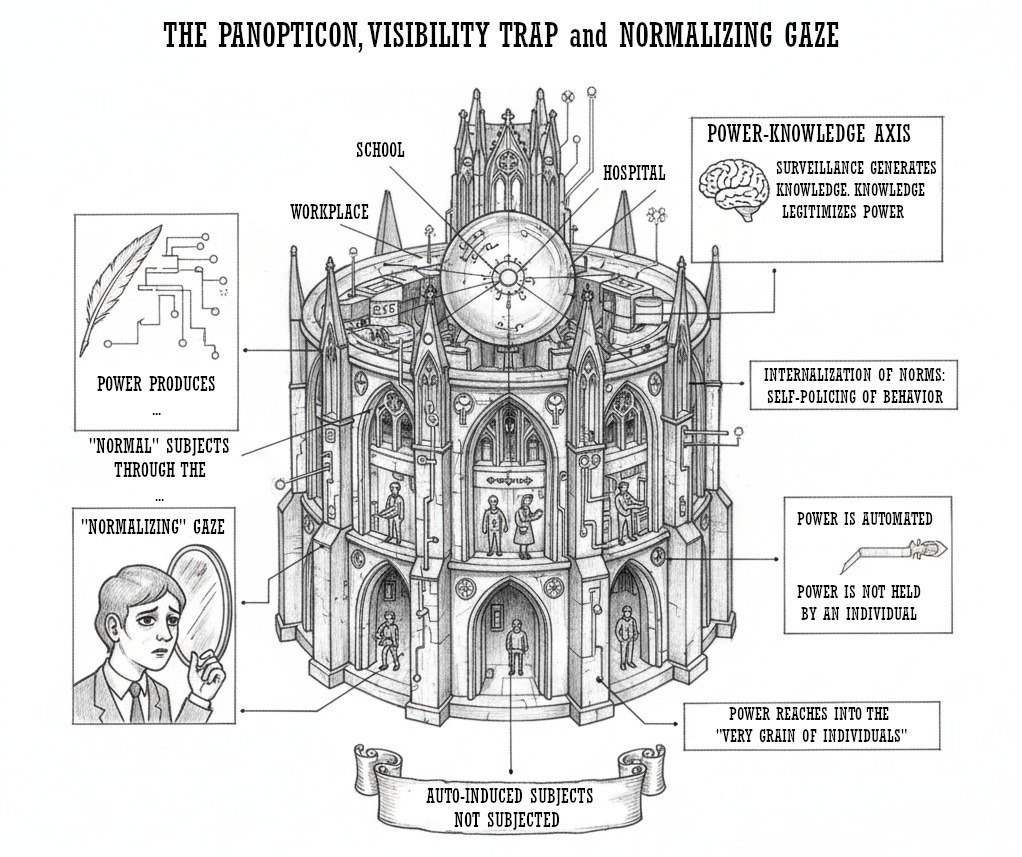

Two centuries later, in Discipline and Punish, Michel Foucault elevated the Panopticon from architectural curiosity to a powerful social metaphor, “the diagram of a mechanism of power reduced to its ideal form.” For Foucault, the Panopticon represented the operating principle of modern “disciplinary societies,” where power is exercised not through spectacular, repressive force, but through subtle, pervasive, and productive mechanisms of control.

Foucault argued that this power is not primarily negative but productive: it produces reality, rituals of truth, and “normal” subjects. Through constant observation in institutions like schools, hospitals, and workplaces, individuals internalize societal norms. This “normalizing gaze” compels them to police their own thoughts and behaviors to conform to what is deemed acceptable. Visibility becomes a “trap” that allows others to order, judge, and categorize us. This process is sustained by the link between power and knowledge. Panoptic surveillance generates vast knowledge about its subjects (school reports, medical records, productivity metrics), which refines and legitimizes the exercise of power over them.

A crucial element of Foucault’s analysis is that this panoptic power is automated and disindividualized. It does not depend on who exercises it; power resides in the arrangement itself. The subject “becomes the principle of his own subjection.” Power is not “held” by a sovereign but “circulates” through a diffuse network, reaching into the “very grain of individuals.”

The conceptual journey from Bentham’s prison to Foucault’s disciplinary society marks an evolution in the logic of power. Bentham’s model was reformative, correcting past behavior. Foucault’s analysis reveals a shift toward a formative or disciplinary logic, shaping the population to prevent deviance. Ambient data collection enables a third stage: preemptive control. Power no longer waits for a crime or institutional discipline. By analyzing vast datasets, the system can predict who is likely to deviate (default on a loan, develop a disease, commit a crime) and intervene before the event. This moves the locus of control from the past (punishment) and present (discipline) to the future (preemption), a fundamental transformation in surveillance.

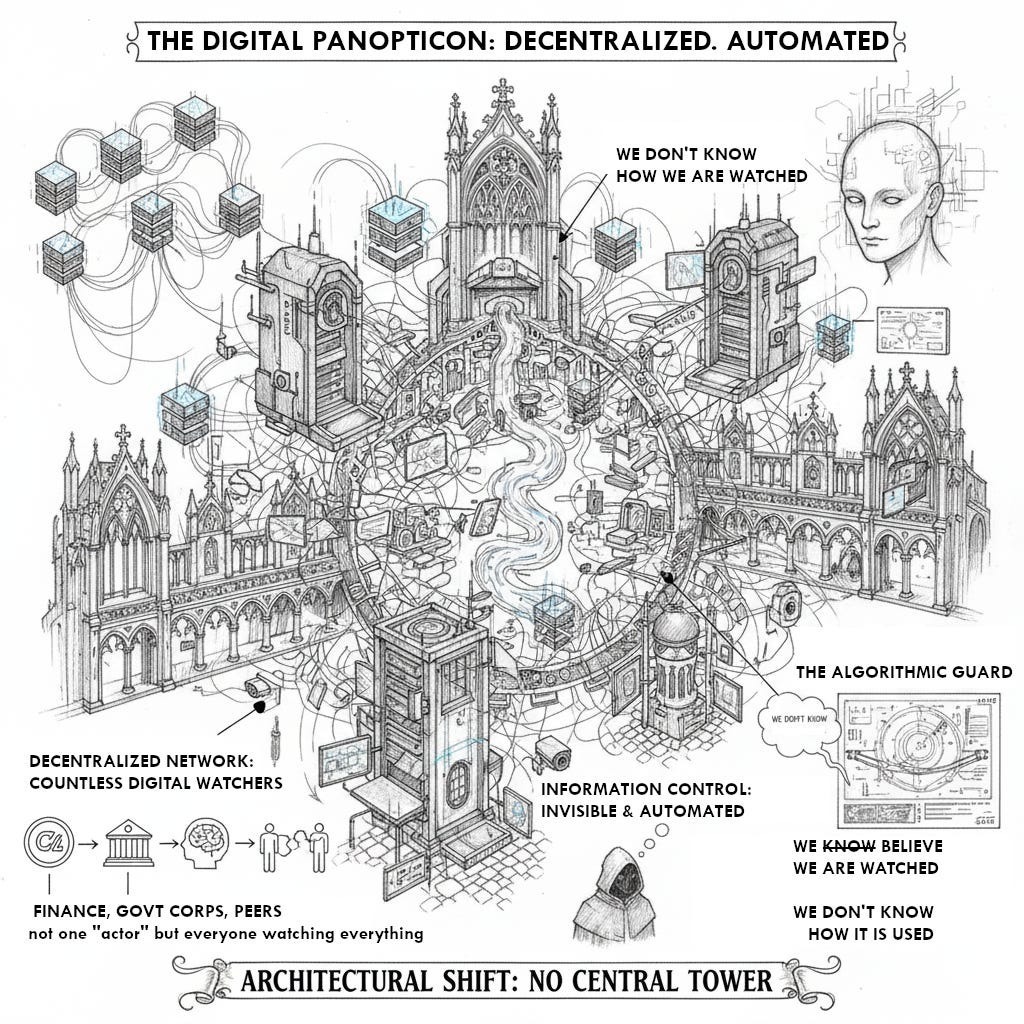

The Digital Panopticon: Reimagining Surveillance

The technological foundation of the modern panoptic society is the Internet of Things (IoT), a network of physical objects with sensors, software, and connectivity to collect and exchange data. This network is the engine of Ambient Intelligence (AmI), creating context-aware, personalized, and adaptive environments that respond without explicit commands. This is achieved through a ubiquitous web of technologies: sensors (motion, temperature, sound, biometric), actuators, and artificial intelligence (AI) and machine learning (ML) algorithms that process data to identify patterns and make decisions. The scope of this data collection is immense, from consumer devices to civic infrastructure.

The IoT as a Decentralized Panopticon

This architecture functions as a digital panopticon, but with critical structural differences. The centralized tower is replaced by a decentralized network of countless digital “watchers”. The observer is not a human guard but a diffuse assemblage of corporate platforms, government agencies, AI algorithms, and peers. The control architecture is informational, woven invisibly into the environment.

The “guard” in this digital panopticon is often an algorithm: invisible, inscrutable, and automated. This deepens the power asymmetry. We are not merely uncertain if we are watched; we are ignorant of how our data is collected, analyzed, and used for decisions about us. This perfects the disindividualized power Foucault described, where the system operates automatically.

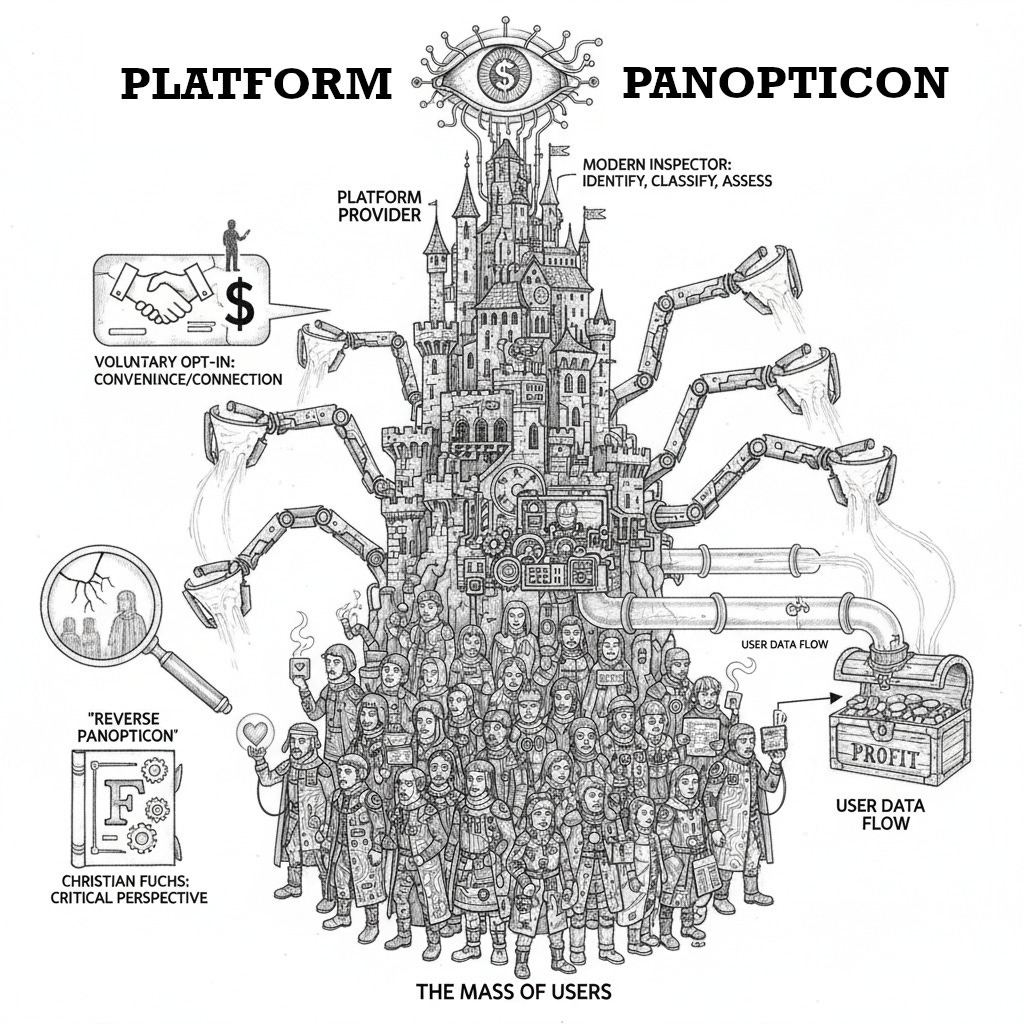

The classical Panopticon metaphor has limitations. The rise of social media introduces the “Synopticon,” sociologist Thomas Mathiesen’s term for the many watching the few. In this model (e.g., followers observing an influencer), individuals voluntarily engage in self-exposure, actively seeking visibility. Synopticism does not replace panopticism; the two coexist.

Unlike Bentham’s prisoners, users of connected devices and social media often voluntarily opt into surveillance, trading privacy for convenience or connection. While some analyses framed social media as a “reverse panopticon” where citizens watched the powerful, this view is insufficient. A more critical perspective, from scholars like Christian Fuchs, argues the true panoptic relationship is between the mass of users and the platform. The platform is the modern inspector, continuously identifying, classifying, and assessing users to profit from their data.

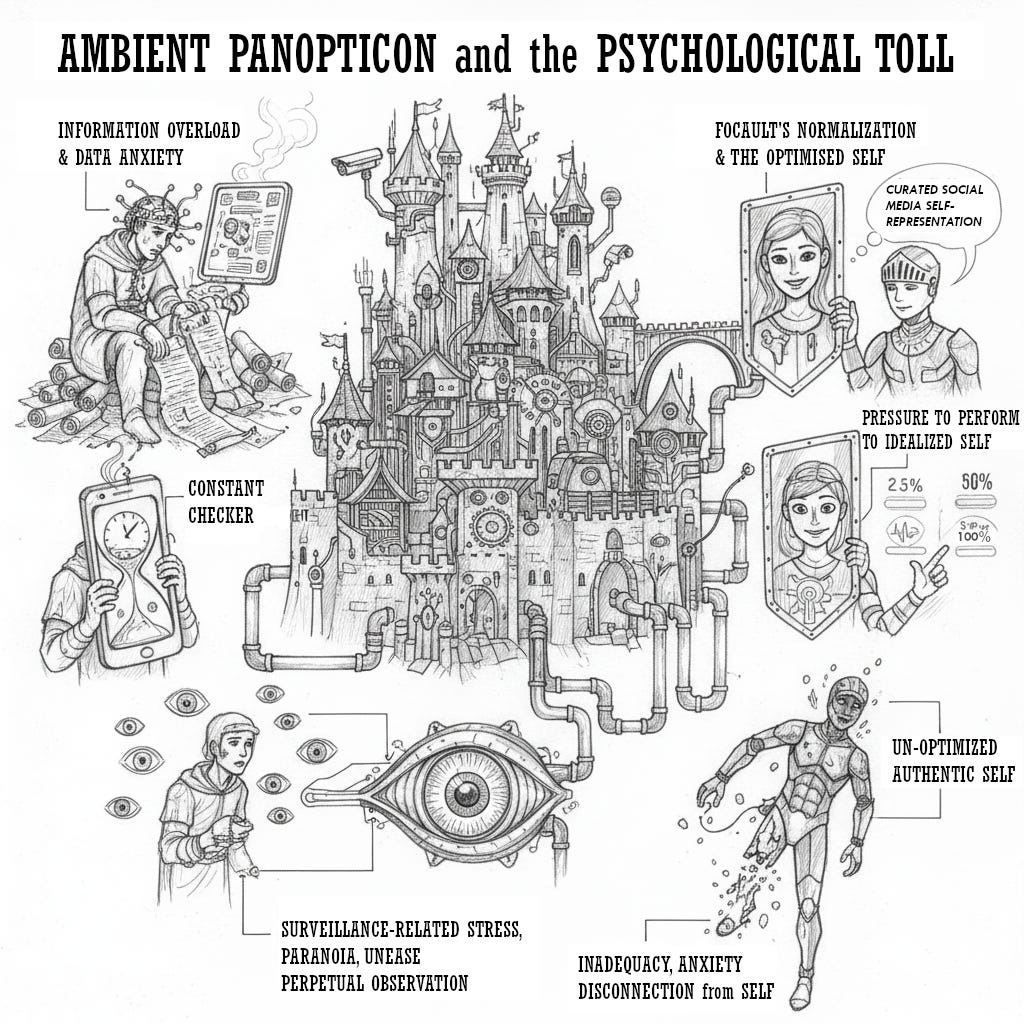

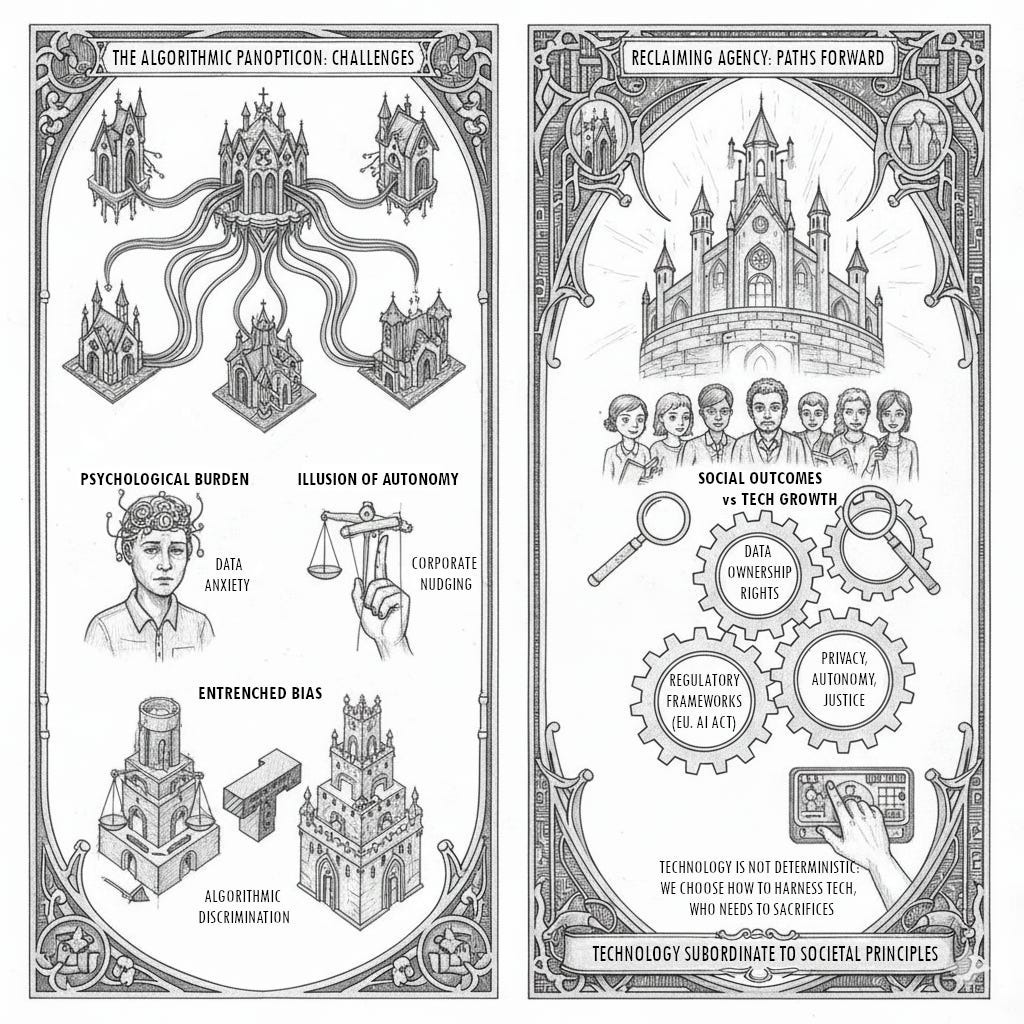

The Internalized Observer: Psychological and Behavioral Consequences

Life within the Ambient Panopticon exacts a significant psychological toll. The sheer volume of data contributes to information overload (IO) and “data anxiety,” a feeling of being overwhelmed. This is compounded by the “constant checker” phenomenon, in which habitually checking connected devices is associated with higher reported stress. The uncanny sense of perpetual observation, even by non-human algorithms, can foster surveillance-related stress, paranoia, and unease.

This constant monitoring extends Foucault’s normalization into every facet of life. Aware that their actions (driving habits, energy use, health metrics) are tracked and judged, individuals feel pressure to perform an idealized, acceptable version of themselves. This is visible in curated social media self-presentation, where users become their own “Big Brother,” filtering their lives. This pressure can lead to feelings of inadequacy, anxiety, and disconnection from one’s authentic, un-optimized self.

The awareness of being monitored creates a “chilling effect” that leads to self-censorship. Fearing that their digital activities might be misinterpreted, flagged, or used against them, individuals may avoid researching sensitive topics, refrain from voicing dissenting opinions, or hesitate to associate with certain groups. This corrodes democratic discourse, intellectual curiosity, and academic freedom. The IoT is especially insidious because it extends this chilling effect from the public internet into the private home. A casual conversation overheard by a smart speaker can become a permanent data point, blurring the boundaries that protect uninhibited thought and intimacy.

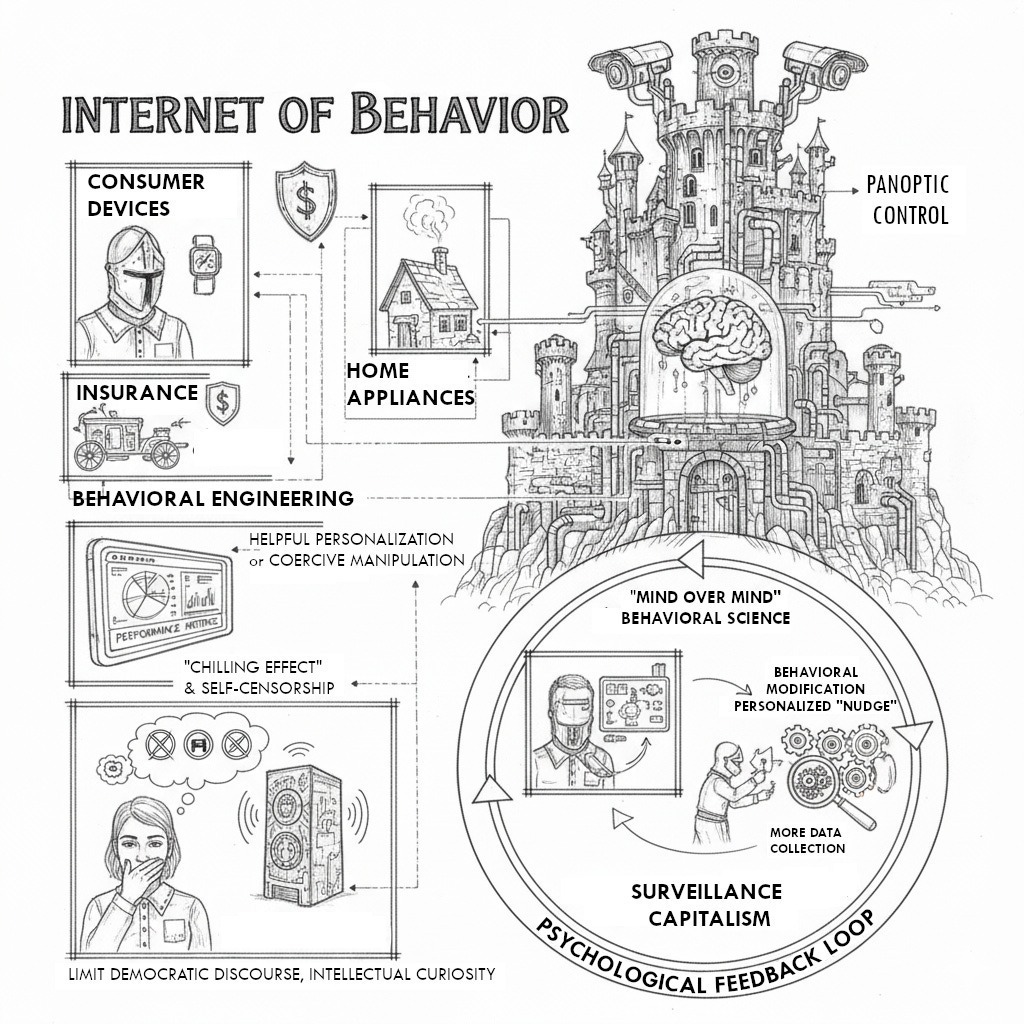

The Internet of Behavior (IoB): From Monitoring to Modification

The ultimate expression of panoptic control is the Internet of Behavior (IoB). The IoB extends the IoT by combining collected data with principles from behavioral science to actively influence human actions. It operationalizes Bentham’s goal of “mind over mind.” IoB mechanisms often appear benevolent: a smartwatch “nudging” a user to be active, an insurance app offering discounts for safer driving, or a smart home suggesting energy conservation.

These nudges constitute subtle but powerful behavioral engineering. The IoB operates on a fine line between helpful personalization and coercive manipulation, raising ethical questions about autonomy and consent. In an IoB-shaped world, the freedom to make “wrong,” “inefficient,” or “suboptimal” choices according to an algorithmic standard is diminished.

The psychological stress from panoptic surveillance and the IoB’s behavioral modification are symbiotically linked. The system creates anxiety, then offers the “solution” as a corrective nudge. Data from wearables generates performance metrics (sleep scores, step counts). Awareness of these metrics, and the failure to meet them, induces “data anxiety” and a desire for self-optimization. The individual feels constant pressure to conform to a data-driven norm. The IoB system, having identified this anxiety, provides a personalized nudge (a product, an alert, tailored content). The user, already self-scrutinizing, is primed to accept this intervention to alleviate anxiety. This creates a self-perpetuating feedback loop: surveillance generates anxiety, increasing receptiveness to behavioral modification, justifying more data collection to refine the nudges. This cycle is the engine of surveillance capitalism at the psychological level.

Mechanisms of Influence: Corporate Power

In the 21st-century economy, personal data from the ambient environment is a primary commodity. Corporations use this data not just to improve products, but to create and sell “prediction products” (forecasts of human behavior) to advertisers, insurers, and other businesses. This “surveillance capitalism,” to use Shoshana Zuboff’s term, thrives on the hyper-personalization and predictive targeting enabled by IoT data. Examples include in-store beacons tracking customers to offer real-time discounts, GPS data triggering nearby ads, and smart appliance data predicting household needs. This turns traditional demographic targeting into a more invasive, real-time behavioral and predictive model.

The ultimate goal of corporate surveillance is not just predicting consumer behavior but actively shaping it, guaranteeing commercial outcomes. By analyzing granular IoT data, companies can identify psychological triggers and deliver persuasive marketing at opportune moments, nudging consumers toward purchases.

The smart home ecosystem (e.g., Amazon Echo, Google Home) is a powerful case study. These devices act as central data-collection hubs, capturing voice commands (and, when a wake word is misheard, fragments of conversation), daily routines, media consumption, and appliance interactions. This data builds detailed user profiles that inform targeted advertising, personalized recommendations, and cross-promotion across an interconnected ecosystem.

This pervasive, IoT-driven marketing can constrain consumer choice. By creating a personalized, frictionless environment of predictive suggestions, the system can lock consumers into a specific corporate ecosystem and make alternatives harder to discover. The result is a “behavioral monopoly.” IoT systems learn user preferences with extreme granularity, then use this data for “helpful” automations, like a smart coffee maker re-ordering a specific brand. This automation creates friction for any alternative choice: to buy a different brand, the user must consciously intervene and fight the seamless flow. Over time, convenience fosters dependency, and the consumer shifts from an active agent to a passive recipient managed by the platform. This grants the platform provider immense power; it controls the marketing channel, the point of purchase, and the behavioral loop itself. In effect, it holds a monopoly on the consumer’s decision-making process.

The Algorithmic Beat: Governmental Surveillance and Predictive Control

Governments deploy IoT technologies to create “smart cities,” framed as improving public safety, efficiency, and quality of life. This involves massive public surveillance infrastructure: facial recognition-enhanced CCTV, sensor-embedded smart streetlights, and automated traffic management. This infrastructure provides the state with an unprecedented capacity for mass surveillance. Modern IT solutions amplify this power by enabling seamless data sharing between agencies, allowing aggregation of vast, interconnected citizen databases for uses beyond their original intent.

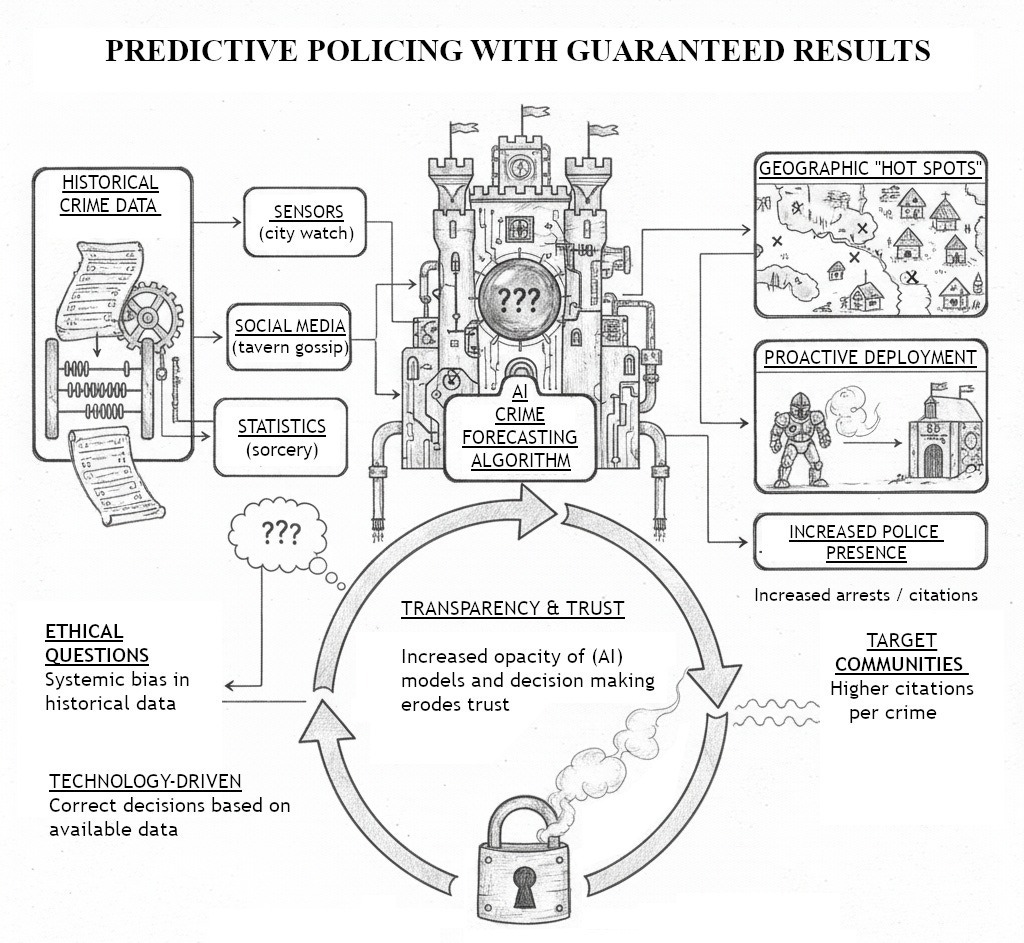

One of the most controversial applications is “predictive policing.” This uses AI algorithms to analyze historical crime data, often combined with IoT sensor data and social media, to forecast geographic “hot spots” where crime is likely. Law enforcement can then proactively deploy resources to deter crime.

This practice is fraught with ethical problems, as highlighted by organizations like the ACLU and NAACP. The historical crime data training these algorithms is not neutral. It reflects past policing practices, which include systemic biases disproportionately targeting minority and low-income communities. When this biased data is fed into a machine learning model, the algorithm learns to associate these communities with higher crime risk, creating a dangerous, self-perpetuating feedback loop. The algorithm directs police to these neighborhoods more frequently. This heightened presence leads to more citations and arrests, which are fed back as new data, “confirming” the algorithm’s biased prediction and entrenching the cycle.
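The feedback loop can be made concrete with a deliberately simplified Python sketch. It is not any vendor’s algorithm: the two districts, their starting counts, and both allocation rules are hypothetical assumptions chosen for illustration. Both districts share the same true incident rate, but incidents are only recorded where patrols are sent, so the data measures police attention rather than crime.

```python
from fractions import Fraction

# Toy model (hypothetical): two districts with the SAME true daily incident
# count; an incident enters the dataset only if a patrol is there to record it.


def greedy_hot_spot(recorded: dict[str, int], true_daily: int, days: int) -> dict[str, int]:
    """Each day, send the patrol only to the district with the most past records."""
    recorded = dict(recorded)  # copy so the caller's history is not mutated
    for _ in range(days):
        hot_spot = max(recorded, key=recorded.get)
        # Only the patrolled district's incidents are recorded, even though
        # both districts experience the same number of incidents.
        recorded[hot_spot] += true_daily
    return recorded


def proportional_patrols(recorded: dict[str, int], true_daily: int, days: int) -> dict[str, Fraction]:
    """Each day, split patrols in proportion to past recorded incidents."""
    recorded = {k: Fraction(v) for k, v in recorded.items()}  # exact arithmetic
    for _ in range(days):
        total = sum(recorded.values())
        shares = {k: v / total for k, v in recorded.items()}
        for k in recorded:
            # Detection scales with patrol presence, not with actual crime.
            recorded[k] += true_daily * shares[k]
    return recorded


if __name__ == "__main__":
    history = {"A": 12, "B": 10}  # hypothetical, slightly skewed history
    print(greedy_hot_spot(history, true_daily=3, days=30))
    final = proportional_patrols(history, true_daily=3, days=30)
    print(final["A"] / sum(final.values()))
```

Under the greedy “hot spot” rule, district A starts with only two extra records yet absorbs every new one (102 records versus 10 after 30 days). Splitting patrols proportionally is not a fix either: A’s recorded share stays frozen at its biased starting value of 6/11 instead of moving toward the true 50/50 split, because the algorithm only ever sees data from where it already looked.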

This system creates technologically justified discrimination. Compounding the issue is a severe lack of transparency. The algorithms are often proprietary “black boxes,” making it nearly impossible to scrutinize their data sources and logic. This opacity undermines due process, erodes public trust, and shields biased systems from oversight.

The proliferation of predictive policing threatens to alter legal standards and erode constitutional protections. The Fourth Amendment protects against unreasonable searches and seizures, and under Terry v. Ohio an investigative stop requires “reasonable suspicion” grounded in “specific and articulable facts.” Predictive policing introduces “algorithmic suspicion.” When an algorithm designates a “hot spot,” it primes officers to view the area and its inhabitants with pre-existing suspicion. This can lower the evidentiary threshold for what an officer considers suspicious, leading to stops based not on observable facts but on an opaque algorithm’s probabilistic output. A computer-driven hunch, cloaked in the authority of data, risks becoming a substitute for individualized suspicion. This process launders historical human bias through a veneer of technological objectivity, making police encounters harder to challenge and altering the relationship between citizen and state.

The Panoptic Sort: Social Stratification

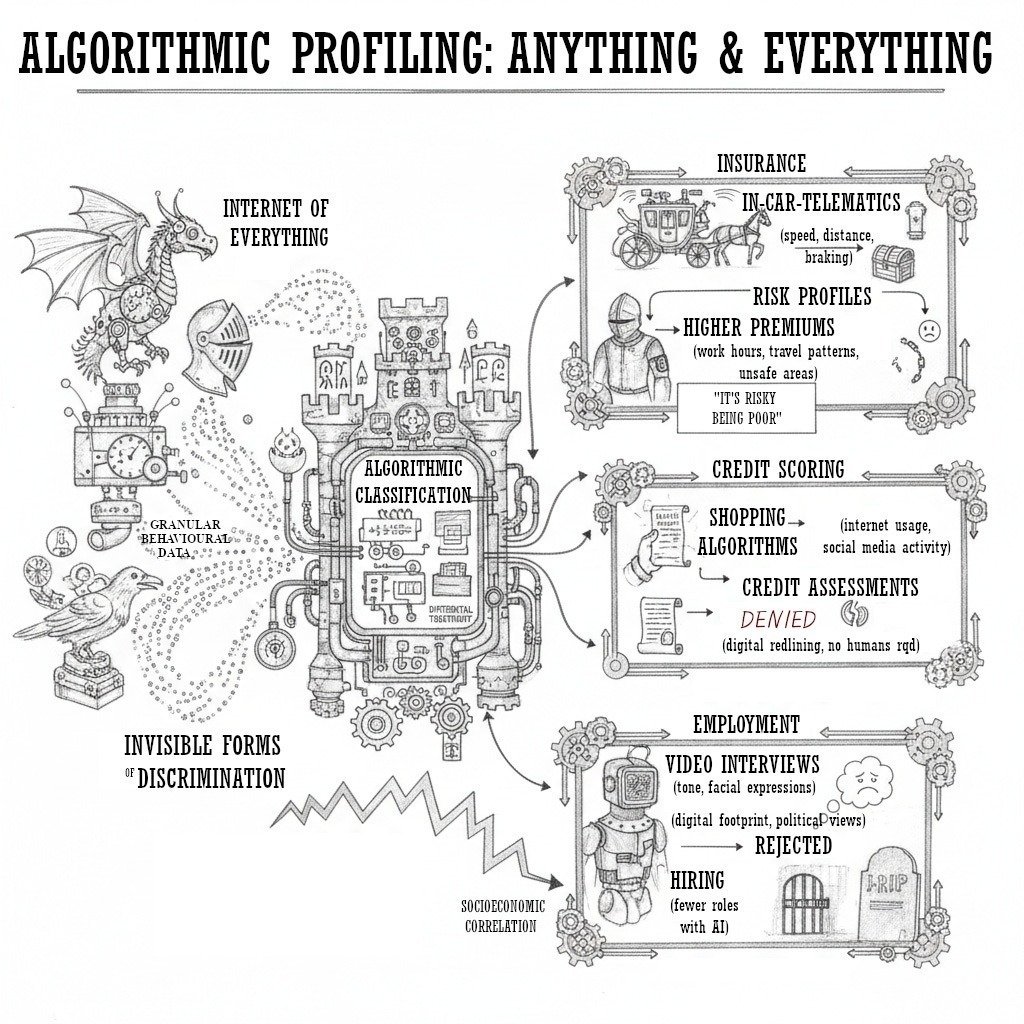

The immense volume of granular behavioral data from the IoT fuels “social sorting,” where individuals are algorithmically classified into groups to receive differential treatment in opportunities, services, and costs. This automated stratification creates new, often invisible forms of discrimination impacting life chances.

This data-driven discrimination is manifesting across critical sectors:

- Insurance: The industry is adopting “behavior-based” models built on IoT data. Data from in-car telematics (speed, braking) and fitness trackers (activity, sleep) create individualized risk profiles. While framed as rewarding low-risk behavior, this can lead to higher premiums or denial of coverage for individuals with pre-existing conditions, those working night shifts, or those living in “unsafe” areas: factors that often serve as proxies for socioeconomic status or disability.

- Credit Scoring: Financial institutions explore non-traditional data (shopping history, social media activity, typing speed) to assess creditworthiness. This creates significant risk of discriminatory outcomes, as these behavioral proxies can correlate with protected characteristics like race or ethnicity. This can result in digital redlining, where marginalized communities are unfairly denied credit based on opaque, biased assessments.

- Employment: Employers use AI and sensor data in hiring and management. Algorithms score video interviews (tone, facial expressions), while workplace sensors monitor productivity. These systems can perpetuate existing biases, discriminating against candidates outside a narrow, data-defined model and creating a work environment of constant surveillance harmful to well-being.
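The insurance case above can be sketched as a toy usage-based pricing rule. Everything here is a hypothetical assumption for illustration (the `TripSummary` fields, the base rate, and both surcharge weights); real insurers’ models are proprietary and far more complex. The point is only that a “behavioral” variable such as night driving can penalize a schedule rather than a risky habit.

```python
from dataclasses import dataclass


@dataclass
class TripSummary:
    """Hypothetical monthly telematics summary for one driver."""
    km: float
    hard_brakes: int
    night_km: float  # kilometres driven late at night


# All pricing parameters below are invented for illustration.
BASE_PREMIUM = 100.0  # monthly base rate
BRAKE_WEIGHT = 0.05   # surcharge per hard brake per 100 km
NIGHT_WEIGHT = 0.30   # surcharge if all driving happened at night


def premium(t: TripSummary) -> float:
    """Price a month of driving from braking behaviour and time of day."""
    brakes_per_100km = 100 * t.hard_brakes / t.km
    night_share = t.night_km / t.km
    factor = 1 + BRAKE_WEIGHT * brakes_per_100km + NIGHT_WEIGHT * night_share
    return round(BASE_PREMIUM * factor, 2)


# Two drivers with identical distance and braking; one commutes to a night shift.
day_worker = TripSummary(km=800, hard_brakes=8, night_km=0)
night_worker = TripSummary(km=800, hard_brakes=8, night_km=600)
print(premium(day_worker), premium(night_worker))
```

With these assumed weights, the day worker pays 105.0 and the night-shift worker 127.5, despite an identical braking record. The surcharge falls on whoever works nights, a group that may skew toward lower-income occupations.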

A crucial social consequence is that algorithmic discrimination is often invisible to both perpetrator and victim. This challenges existing anti-discrimination laws, which were designed for overt bias in human decision-making. An algorithm can produce a discriminatory outcome without explicitly using a protected category like race. It can instead rely on thousands of seemingly neutral data points (zip code, purchasing habits) that function as proxies. A company deploying such an algorithm may have no intent to discriminate; it is simply using a tool that promises accuracy. An individual denied a loan, job, or policy may never learn the reasons, let alone that a biased algorithm was involved. This creates a profound accountability gap. Our legal frameworks are ill-equipped to challenge the complex statistical correlations that lead to discriminatory outcomes, allowing society to entrench historical inequalities under a veneer of mathematical objectivity.
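The proxy mechanism can be illustrated with a small Python sketch. All data is hypothetical: fifty applicants from each of two groups whose residential distribution is skewed, and a lending rule (`decide`, an invented name) that reads only the zip code. The protected attribute is kept solely so outcomes can be audited afterwards.

```python
from collections import defaultdict

# Hypothetical applicants: (protected_group, zip_code). The decision rule
# never reads protected_group; it is retained only for the outcome audit.
APPLICANTS = (
    [("group_x", "10001")] * 40 + [("group_x", "20002")] * 10
    + [("group_y", "10001")] * 10 + [("group_y", "20002")] * 40
)

# Hypothetical "neutral" rule, e.g. learned from historical repayment data
# that itself reflects past under-investment in zip code 20002.
APPROVE_ZIPS = {"10001"}


def decide(zip_code: str) -> bool:
    """Approve based on zip code alone: no protected attribute is used."""
    return zip_code in APPROVE_ZIPS


def approval_rates(applicants):
    """Audit: approval rate per protected group."""
    approved, total = defaultdict(int), defaultdict(int)
    for group, zip_code in applicants:
        total[group] += 1
        approved[group] += int(decide(zip_code))
    return {g: approved[g] / total[g] for g in total}


rates = approval_rates(APPLICANTS)
# Disparate impact ratio: lowest group approval rate divided by the highest.
ratio = min(rates.values()) / max(rates.values())
print(rates)
print(ratio)
```

Here group_x is approved 80% of the time and group_y 20%, a ratio of 0.25, far below the 0.8 benchmark of the “four-fifths” rule of thumb in US employment-selection guidelines. Yet a review of `decide` alone would find nothing that mentions group membership, which is why such bias surfaces only when outcomes, not inputs, are audited.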

Navigating the Contours of Freedom

The pervasive ecosystem of ambient data collection functions as a decentralized, algorithmic panopticon. This modern surveillance apparatus has evolved beyond Bentham’s architectural model and beyond Foucault’s disciplinary society, ushering in a new paradigm of predictive control and automated behavioral modification. The social consequences are profound: a significant psychological burden, systemic erosion of autonomy through corporate nudging, entrenchment of historical biases through governmental surveillance, and new, algorithmically driven forms of social stratification.

In this paradigm, power is more diffuse, automated, and opaque. If the panoptic machine is disindividualized and operates automatically, resistance becomes complex. Meaningful opposition cannot target a single inspector but must target the system’s underlying architecture. A path forward requires a multi-pronged approach: algorithmic transparency, clear data ownership rights, and robust regulatory frameworks (like the EU’s AI Act) that prioritize human rights. Equally important is cultivating critical digital literacy, fostering a public aware of surveillance mechanisms and empowered to demand accountability.

The trajectory of technology is not deterministic. The “smart” future is a landscape of choices. By using the frameworks of Bentham and Foucault to understand the Ambient Panopticon, society is better equipped to debate the kind of world it wishes to build. The challenge lies in harnessing technology’s benefits without sacrificing the fundamental human values of privacy, autonomy, and justice. Reclaiming agency in a world that is always watching requires not rejecting technology, but a conscious, collective effort to subordinate its logic to our most cherished principles.

References

[1] What is Ambient Intelligence? – Arm®, https://www.arm.com/glossary/ambient-intelligence

[2] Understanding Ambient Intelligence: What Is It & How Does It Work? | by Eastgate Software, https://medium.com/@eastgate/understanding-ambient-intelligence-what-is-it-how-does-it-work-83d55f8b1c0b

[3] What is Ambient Computing? – AI, Data & Analytics Network, https://www.aidataanalytics.network/business-intelligence/articles/what-is-ambient-computing

[4] Internet of things – Wikipedia, https://en.wikipedia.org/wiki/Internet_of_things

[5] Ambient intelligence – Wikipedia, https://en.wikipedia.org/wiki/Ambient_intelligence

[6] What Is the Internet of Things (IoT)? With Examples | Coursera, https://www.coursera.org/articles/internet-of-things

[7] Panopticon | Research Starters | EBSCO Research, https://www.ebsco.com/research-starters/history/panopticon

[8] Understanding Foucault’s Theory of Power and Control in … – Medium, https://medium.com/@asteria1881/understanding-foucaults-theory-of-power-and-control-in-discipline-and-punish-5c1da9008a5e

[9] Ethics Explainer: The Panopticon, https://ethics.org.au/ethics-explainer-panopticon-what-is-the-panopticon-effect/

[10] Internalized Authority and the Prison of the Mind: Bentham and Foucault’s Panopticon, https://www.brown.edu/Departments/Joukowsky_Institute/courses/13things/7121.html

[11] Foucault-Panopticism.pdf, https://bgsp.edu/app/uploads/2014/12/Foucault-Panopticism.pdf

[12] Panopticon – Wikipedia, https://en.wikipedia.org/wiki/Panopticon

[13] Panopticon States and the Hawthorne Effect – Eye Am Watching – The Innovation Show, https://theinnovationshow.io/panopticon-states-and-the-hawthorne-effect-eye-am-watching/

[14] Discipline and Punish Panopticism Summary & Analysis – SparkNotes, https://www.sparknotes.com/philosophy/disciplinepunish/section7/

[15] The Physics of Power: Stories of Panopticism at Two Levels of the School System, https://traue.commons.gc.cuny.edu/the-physics-of-power-stories-of-panopticism-at-two-levels-of-the-school-system/

[16] The panopticon effect: How best to handle surveillance – Big Think, https://bigthink.com/business/the-panopticon-effect-how-best-to-handle-surveillance/

[17] Foucault, panopticism and digital power – The Dead Philosophers’ Guide to New Technology, https://deadphilosophers.wordpress.com/2018/08/10/foucault-panopticism-and-digital-power/

[18] Ambient Intelligence: Examples & Benefits in Healthcare – Heidi Health, https://www.heidihealth.com/blog/ambient-intelligence

[19] 13 Examples of IoT and Big Data – Built In, https://builtin.com/articles/iot-big-data-analytics-examples

[20] 40 IoT Applications & Use Cases with Real-Life Examples – Research AIMultiple, https://research.aimultiple.com/iot-applications/

[21] Decentralization of the Panopticon, https://scalar.usc.edu/works/working-title-critical-theory-book-ccu-2017/decentralization-of-the-panopticon

[22] An IoT Surveillance System Based on a Decentralised Architecture …, https://pmc.ncbi.nlm.nih.gov/articles/PMC6471351/

[23] The Digital Panopticon: How Social Media Mirrors Bentham’s Watchtower | by Joel Wong, https://medium.com/@joel_wong/the-digital-panopticon-how-social-media-mirrors-benthams-watchtower-1c05df2b26b8

[24] Foucault and social media: life in a virtual panopticon | Philosophy …, https://philosophyforchange.wordpress.com/2012/06/21/foucault-and-social-media-life-in-a-virtual-panopticon/

[25] The architecture of IoT – InfiSIM, https://infisim.com/blog/architecture-of-iot

[26] Is AI the ultimate Panopticon? Is it possible for AI to be neutral? Or is …, https://www.researchgate.net/post/Is_AI_the_ultimate_Panopticon_Is_it_possible_for_AI_to_be_neutral_Or_is_AI_a_form_of_Governance_GovernMENTALITY

[27] Full article: Revisiting Foucault’s panopticon: how does AI surveillance transform educational norms? – Taylor & Francis Online, https://www.tandfonline.com/doi/full/10.1080/01425692.2025.2501118

[28] Rethinking Privacy In The Age Of Social Media Surveillance | Rock …, https://www.rockandart.org/privacy-social-media-surveillance/

[29] The Lawless Land of Social Media: A Proposal of Synopticism as a Product of Panopticism Emma East – Alpha Chi, https://alphachihonor.org/headquarters/files/Website%20Files/Aletheia/Volume_6_2/East_EAX0293.pdf

[30] The Smart Panopticon-opolis – Smart Cities Dive, https://www.smartcitiesdive.com/ex/sustainablecitiescollective/smart-panopticopolis/28454/

[31] (PDF) Michel Foucault, Panopticism, and Social Media – ResearchGate, https://www.researchgate.net/publication/328887158_Michel_Foucault_Panopticism_and_Social_Media

[32] The Negative Psychological Effects of Information Overload, https://bcpublication.org/index.php/EP/article/download/4692/4562/4519

[33] Data Anxiety: The Impact of Information Overload – Hospitality Insights, https://hospitalityinsights.ehl.edu/data-anxiety

[34] Survey finds constantly checking electronic devices linked to significant stress – American Psychological Association, https://www.apa.org/news/press/releases/2017/02/checking-devices

[35] How Does Data Collection Affect Our Well-Being? → Question, https://lifestyle.sustainability-directory.com/question/how-does-data-collection-affect-our-well-being/

[36] Exploring the Impact of Security Technologies on Mental Health: A Comprehensive Review, https://pmc.ncbi.nlm.nih.gov/articles/PMC10918303/

[37] Digital Surveillance and Democracy: Navigating the Challenges of the Digital Age, https://impactpolicies.org/news/594/digital-surveillance-and-democracy-navigating-the-challenges-of-the-digital-age

[38] Censorship and Surveillance in the Digital Age: The Technological Challenges for Academics – ResearchGate, https://www.researchgate.net/publication/310464517_Censorship_and_Surveillance_in_the_Digital_Age_The_Technological_Challenges_for_Academics

[39] Internet of Things and Privacy – Issues and Challenges – Office of …, https://ovic.vic.gov.au/privacy/resources-for-organisations/internet-of-things-and-privacy-issues-and-challenges/

[40] Privacy and the Internet of Things: Emerging Frameworks for Policy and Design – CLTC UC Berkeley Center for Long-Term Cybersecurity, https://cltc.berkeley.edu/iotprivacy/

[41] IoT Privacy Brief_20190912_Final-EN – Internet Society, https://www.internetsociety.org/wp-content/uploads/2019/09/IoT-Privacy-Brief_20190912_Final-EN.pdf

[42] Data privacy and the Internet of Things | UNESCO Inclusive Policy Lab, https://en.unesco.org/inclusivepolicylab/analytics/data-privacy-and-internet-of-things

[43] Beyond IoT: How the Internet of Behavior is Transforming … – Infosys, https://www.infosys.com/services/incubating-emerging-technologies/documents/transforming-human-experiences.pdf

[44] Practice Innovations: Will the Internet of Behavior change how we act and interact?, https://www.thomsonreuters.com/en-us/posts/legal/practice-innovations-january-2022-internet-of-behavior/

[45] The Internet of Things: A Landmark Technology for Behavior Change? – RGA, https://www.rgare.com/knowledge-center/article/the-internet-of-things-a-landmark-technology-for-behavior-change

[46] The Internet of Things: A Landmark Technology for Behavior Change? – BehavioralEconomics.com, https://www.behavioraleconomics.com/the-internet-of-things-a-landmark-technology-for-behavior-change/

[47] A Comprehensive Review of Behavior Change Techniques in Wearables and IoT: Implications for Health and Well-Being – ResearchGate, https://www.researchgate.net/publication/379739195_A_Comprehensive_Review_of_Behavior_Change_Techniques_in_Wearables_and_IoT_Implications_for_Health_and_Well-Being

[48] Why Does Data Collection Impact Mental Well-Being? → Question, https://lifestyle.sustainability-directory.com/question/why-does-data-collection-impact-mental-well-being/

[49] Ambient Data – reelyActive, https://www.reelyactive.com/ambient-data/

[50] Reaching and Retaining Consumers – How IoT is Enabling Target marketing and Personalisation, https://www.iottechexpo.com/2020/02/iot/iot-reaching-and-retaining-customers/

[51] IoT is Changing Advertising: Here’s How to Get in the Game – Basis Technologies, https://basis.com/blog/iot-changing-advertising

[52] IoT Data Analytics: Shaping Brand Engagement in the Digital Age – Emeritus, https://emeritus.org/blog/iot-data-analytics-use/

[53] How IoT is Changing Advertising: Benefits and Use-Cases | Cogniteq, https://www.cogniteq.com/blog/how-iot-changing-advertising-new-possibilities-and-top-use-cases

[54] How The Internet Of Things Will Drive Marketing Forward – Forbes, https://www.forbes.com/councils/forbesagencycouncil/2024/07/11/how-the-internet-of-things-will-drive-marketing-forward/

[55] Leveraging IT for Smarter, Modernized Policing | Sourcepass GOV, https://blog.sourcepassgov.com/sourcepass-gov/leveraging-it-for-smarter-modernized-policing

[56] Surveillance and Predictive Policing Through AI – Deloitte, https://www.deloitte.com/za/en/Industries/government-public/perspectives/urban-future-with-a-purpose/surveillance-and-predictive-policing-through-ai.html

[57] The Impact of IoT on Public Safety in Smart Cities – Trigyn Technologies, https://www.trigyn.com/insights/impact-iot-public-safety-smart-cities

[58] Using Artificial Intelligence to Address Criminal Justice Needs, https://nij.ojp.gov/topics/articles/using-artificial-intelligence-address-criminal-justice-needs

[59] Untitled – NAACP, https://naacp.org/sites/default/files/documents/CHOPE_AI.pdf

[60] Statement of Concern About Predictive Policing by ACLU and 16 Civil Rights Privacy, Racial Justice, and Technology Organizations, https://www.aclu.org/documents/statement-concern-about-predictive-policing-aclu-and-16-civil-rights-privacy-racial-justice

[61] Ethical Considerations of Using AI for Predictive Policing and Surveillance – ResearchGate, https://www.researchgate.net/publication/394480144_Ethical_Considerations_of_Using_AI_for_Predictive_Policing_and_Surveillance

[62] Artificial Intelligence in Predictive Policing Issue Brief – NAACP, https://naacp.org/resources/artificial-intelligence-predictive-policing-issue-brief

[63] AI Generated Police Reports Raise Concerns Around Transparency, Bias | ACLU, https://www.aclu.org/news/privacy-technology/ai-generated-police-reports-raise-concerns-around-transparency-bias

[64] Discrimination Aware Data Mining in Internet of Things (IoT), https://www.ijcaonline.org/archives/volume159/number3/gorave-2017-ijca-912894.pdf

[65] The Big Data World: Benefits, Threats and Ethical Challenges – Emerald Publishing, https://www.emerald.com/books/oa-edited-volume/10727/chapter/80340953/The-Big-Data-World-Benefits-Threats-and-Ethical

[66] REGULATING THE INTERNET OF THINGS: FIRST STEPS TOWARDS MANAGING DISCRIMINATION, PRIVACY, SECURITY & CONSENT By Scott R. Peppet – National Telecommunications and Information Administration, https://www.ntia.gov/files/ntia/publications/ssrn-id2409074.pdf

[67] AI, insurance, discrimination and unfair differentiation: an overview and research agenda, https://www.tandfonline.com/doi/abs/10.1080/17579961.2025.2469348

[68] Discrimination: Considerations for Machine Learning, AI Models, and Underlying Data – American Academy of Actuaries, https://www.actuary.org/sites/default/files/2023-08/risk-brief-discrimination.pdf

[69] JIR Article – AI-Enabled Underwriting Brings New Challenges for Life Insurance: Policy and Regulatory Considerations – NAIC, https://content.naic.org/sites/default/files/JIR-ZA-40-08-EL.pdf

[70] Big Data: A tool for inclusion or exclusion? Understanding the issues (FTC Report), https://www.ftc.gov/system/files/documents/reports/big-data-tool-inclusion-or-exclusion-understanding-issues/160106big-data-rpt.pdf

[71] Big Data and Discrimination | The University of Chicago Law Review, https://lawreview.uchicago.edu/print-archive/big-data-and-discrimination

[72] Big Data & Issues & Opportunities: Discrimination – Bird & Bird, https://www.twobirds.com/en/insights/2019/global/big-data-and-issues-and-opportunities-discrimination

[73] Data and Algorithms at Work: The Case for Worker Technology Rights, https://laborcenter.berkeley.edu/data-algorithms-at-work/

[74] Algorithmic discrimination: examining its types and regulatory measures with emphasis on US legal practices – PMC, https://pmc.ncbi.nlm.nih.gov/articles/PMC11148221/

[75] Big Data: A Report on Algorithmic Systems, Opportunity, and Civil Rights – Obama White House, https://obamawhitehouse.archives.gov/sites/default/files/microsites/ostp/2016_0504_data_discrimination.pdf